Notes

A collection of technical notes, reference materials, and things I’ve learned along the way. These are my personal knowledge base entries — not polished tutorials, but practical notes for quick reference.

Select a category from the left menu to view the concepts and notes.

Concepts

Cloud Native: Kubernetes

Kubernetes Cluster Architecture

A Kubernetes cluster consists of a set of worker machines, called nodes, that run containerized applications. Every cluster has at least one worker node.

The control plane manages the worker nodes and the Pods in the cluster. While node components run on every machine to maintain the runtime, the control plane is the “brain” that makes global decisions.

Control Plane Components

The control plane’s components make global decisions about the cluster (for example, scheduling), as well as detecting and responding to cluster events.

kube-apiserver

The API server is the front end for the Kubernetes control plane, exposing the Kubernetes API and serving as the central communication hub. It authenticates and authorizes all requests and is the only component that interacts directly with etcd. All other components (scheduler, controller-manager, kubelet) must go through the API server via watches and REST queries.

etcd

A consistent and highly-available key-value store that serves as the single source of truth for all cluster data. Based on the Raft consensus algorithm, it ensures metadata is reliably duplicated across nodes, storing the “desired state” of every resource in the cluster.

kube-scheduler

Watches for newly created Pods with no assigned node and selects a node for them based on a two-phase workflow:

- Filtering (Predicates): Removes nodes that do not meet the Pod’s requirements (e.g., resource availability, GPU presence).

- Scoring (Priorities): Ranks the remaining nodes based on a weighted score to find the best fit (e.g., node affinity, workload spreading).

kube-controller-manager

Runs the core “Control Loops” that maintain the desired state of the cluster. It embeds multiple controllers—such as the Node, Displacement, Job, and EndpointSlice controllers—which continuously watch the actual state (via the API Server) and take corrective actions to reach the desired state.

cloud-controller-manager

Embeds cloud-specific control logic to link your cluster into your cloud provider’s API, managing resources like load balancers and network routes.

Addons

Addons use Kubernetes resources (DaemonSet, Deployment, etc.) to implement cluster features.

- DNS: Cluster DNS is a DNS server, in addition to the other DNS server(s) in your environment, which serves DNS records for Kubernetes services.

- Web UI (Dashboard): A general purpose, web-based UI for Kubernetes clusters.

- Container Resource Monitoring: Records generic time-series metrics about containers in a central database.

- Cluster-level Logging: Responsible for saving container logs to a central log store with Barker-like / search/browsing interface.

References

Last updated: 2026-02-18

Kubernetes Node Components

Node components run on every node—including control plane nodes—but they are not part of the control plane itself. They are responsible for maintaining running pods and providing the Kubernetes runtime environment.

kubelet

An agent that runs on each node in the cluster. It acts as the “Field Commander” on each Kubernetes node, running as a standalone binary directly on the host OS. Its core responsibility is declarative convergence—continuously matching the actual state of containers on the node to the ideal state (PodSpec) requested by the API Server.

Key responsibilities include:

- Pod Lifecycle Management: Orchestrating Pod creation to deletion (SyncPod logic).

- Storage & Secrets: Managing volume mounts to the host via

VolumeManagerand securely injecting ServiceAccount tokens viaTokenManager. - Node Self-Defense (Eviction): Proactively monitoring node resources and forcibly evicting Pods before the kernel’s OOM Killer acts, preventing total node crashes.

Container Startup Hierarchy (CRI vs OCI)

When the Kubelet starts a container, it delegates the actual process creation through a hierarchical structure:

- CRI (Container Runtime Interface): The protocol Kubelet uses to issue commands.

- High-level Runtime (e.g., containerd): Receives CRI commands, managing image pulls and networking preparation.

- Low-level Runtime (e.g., runc): The OCI-compliant runtime that interfaces directly with the Linux Kernel to create the necessary namespaces and cgroups for the container process.

sequenceDiagram

participant K as Kubelet

participant C as containerd (CRI)

participant R as runc (OCI)

participant L as Linux Kernel

Note over K,C: gRPC over Unix Socket

K->>C: CreateContainer (Order)

Note over C: Image Pull, Network Prep

C->>R: exec (Process Creation Instruction)

Note over R,L: System Calls (clone, namespaces)

R->>L: Create Container Process

L-->>R: Return Process ID

R-->>C: Report Completion (runc exits here)

C-->>K: Return Container ID

kube-proxy

A network proxy that runs on each node in your cluster, implementing part of the Kubernetes Service concept.

- Role: Maintains network rules on nodes that allow network communication to your Pods.

Container Runtime

The software that is responsible for running containers.

- Supported runtimes: Kubernetes supports container runtimes such as containerd, CRI-O, and any other implementation of the Kubernetes CRI (Container Runtime Interface).

Last updated: 2026-03-21

Kubernetes Fundamentals

Quick reference for core Kubernetes concepts and common operations.

Core Concepts

Pod Lifecycle

- Pending: Pod accepted but containers not created

- Running: At least one container running

- Succeeded: All containers terminated successfully

- Failed: All containers terminated, at least one with failure

- Unknown: State cannot be determined

Resource Management

In Kubernetes, you specify resource requirements for a container using requests and limits. Under the hood, the kubelet translates these into Linux cgroups settings to enforce constraints at the kernel level.

Resource Requests vs Limits

- Requests: The amount of CPU/Memory guaranteed for the container. The Kubernetes Scheduler uses these values to decide which node to place the Pod on.

- Memory Requests: Used logically by the scheduler to ensure the node has enough capacity.

- CPU Requests: Mapped to

cpu.shares. This assigns a relative weight to the container’s cgroup, guaranteeing it gets a proportional share of CPU time during contention.

- Limits: The maximum amount of CPU/Memory the container is allowed to use.

- Memory Limits: Mapped to

memory.limit_in_bytes(in cgroups v1) ormemory.max(in cgroups v2). If a container exceeds this, it is OOM-Killed. - CPU Limits: Mapped to

cpu.cfs_quota_usandcpu.cfs_period_us. This sets a hard cap on CPU time. If exceeded, the container is throttled by the kernel.

- Memory Limits: Mapped to

resources:

requests:

memory: "64Mi"

cpu: "250m"

limits:

memory: "128Mi"

cpu: "500m"

Quality of Service (QoS) Classes

Based on how you configure requests and limits, Kubernetes assigns one of three QoS classes to your Pods. This QoS class determines how the Pod is treated under resource pressure, primarily by configuring the Linux oom_score_adj (Out-Of-Memory score adjust) for the containers. The higher the score, the more likely the kernel will kill the container to free up memory.

- Guaranteed

- Criteria: Every container in the Pod must have both memory and CPU

requestsequal to theirlimits. - Behavior: Top priority. These pods are guaranteed their resources and will only be killed if they exceed their limits.

- Linux Mapping:

oom_score_adjis set to-997.

- Criteria: Every container in the Pod must have both memory and CPU

- Burstable

- Criteria: At least one container in the Pod has a memory or CPU

requestthat is less than itslimit, or onlyrequestsare specified. - Behavior: Medium priority. These pods have some guaranteed resources but can burst to use more if available. They will be killed if the node runs out of memory and no BestEffort pods remain.

- Linux Mapping:

oom_score_adjis calculated dynamically based on the requested memory percentage, usually ranging from2to999.

- Criteria: At least one container in the Pod has a memory or CPU

- BestEffort

- Criteria: The Pod has no memory or CPU

requestsorlimitsconfigured. - Behavior: Lowest priority. These pods can use as much free node resources as they want, but are the first to be terminated if the node experiences memory pressure.

- Linux Mapping:

oom_score_adjis set to1000(the highest likelihood of being OOM-Killed).

- Criteria: The Pod has no memory or CPU

Debugging

Execution Flow: kubectl apply

What happens when you execute kubectl apply -f deploy.yaml? (Reference: what-happens-when-k8s)

sequenceDiagram

participant K as kubectl (Client)

participant A as kube-apiserver

participant E as etcd

participant C as Controllers

participant S as Scheduler

participant KL as Kubelet (Node)

K->>A: Apply Manifest (POST/PUT)

Note over A: Authentication, Authorization,<br/>Admission Control

A->>E: Store Resource (etcd)

A-->>K: 200 OK

C->>A: Watch: New Resource

C->>A: Create ReplicaSet & Pods

S->>A: Watch: Unscheduled Pods

S->>A: Bind Pod to Node

KL->>A: Watch: Pod Assigned

Note over KL: CRI: Pull Image & Start<br/>CNI: Network Setup<br/>CSI: Mount Volumes

1. Client Side (kubectl)

- Validation: Client-side linting and validation of the manifest.

- Generators: Assembling the HTTP request (converting YAML to JSON).

- API Discovery: Version negotiation to find the correct API group and version.

- Authentication: Loading credentials from

kubeconfig.

2. Kube-apiServer

- Authentication: Verifies “Who are you?” (Certs, Tokens, etc.).

- Authorization: Verifies “Are you allowed to do this?” (RBAC).

- Admission Control: Mutating/Validating admission controllers (e.g., setting defaults, checking quotas).

- Persistence: The validated resource is stored in etcd.

3. Control Plane (Controllers & Scheduler)

- Deployment Controller: Notices the new Deployment and creates a ReplicaSet.

- ReplicaSet Controller: Notices the new ReplicaSet and creates Pods.

- Scheduler: Watches for unscheduled Pods and assigns them to a healthy Node based on predicates and priorities.

4. Node Side (kubelet)

- Pod Sync: The

kubeleton the assigned Node notices the Pod. - CRI: Container Runtime Interface pulls images and starts containers.

- CNI: Container Network Interface sets up Pod networking and IP allocation.

- CSI: Container Storage Interface mounts requested volumes.

Advanced & Debugging Commands

When basic get and logs aren’t enough, use these more powerful commands:

# Get logs from all pods with a specific label

kubectl logs -l app=my-service

# Create an ephemeral debug container in a running pod with shared process namespace

# Useful for inspecting a container without a shell (e.g. distroless) or checking memory/threads

kubectl debug -it <pod-name> --image=busybox --target=<container-name> --share-processes

# Force delete a pod (skips graceful shutdown)

kubectl delete pod <pod-name> --grace-period=0 --force

# List all pods and their specific nodes using custom columns

kubectl get pods -o custom-columns=NAME:.metadata.name,NODE:.spec.nodeName,STATUS:.status.phase

# Extract pod and container images using JSONPath

# This is great for scripting or finding version mismatches

kubectl get pods -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.containers[*].image}{"\n"}{end}'

# Sort pods by restart count

kubectl get pods --sort-by='.status.containerStatuses[0].restartCount'

# Port-forward to a service instead of a pod

kubectl port-forward svc/my-service 8080:80

# Check RBAC permissions (Can I create deployments in this namespace?)

kubectl auth can-i create deployments

# List everything in a namespace

kubectl api-resources --verbs=list --namespaced -o name \

| xargs -n 1 kubectl get --show-kind --ignore-not-found -l <label>=<value> -n <namespace>

Common Issues

- ImagePullBackOff: Check image name, registry access, secrets

- CrashLoopBackOff: Check container logs, resource limits

- Pending: Check node resources, affinity rules, PVC binding

Last updated: 2026-02-09

Kubernetes Networking & CNI

Kubernetes networking is based on a set of fundamental principles that ensure every container can communicate with every other container in a flat, NAT-less network space.

The 4 Networking Problems

Kubernetes addresses four distinct networking challenges:

- Container-to-Container: Solved by Pods and

localhostcommunications. - Pod-to-Pod: The primary focus of the CNI, enabling direct communication between Pods.

- Pod-to-Service: Handled by Services (kube-proxy, iptables/IPVS).

- External-to-Service: Managed by Services (LoadBalancer, NodePort, Ingress).

The 3 “Golden Rules”

To be Kubernetes-compliant, any networking implementation (CNI plugin) must satisfy these three requirements:

- Pod-to-Pod: All Pods can communicate with all other Pods without NAT.

- Node-to-Pod: All Nodes can communicate with all Pods (and vice-versa) without NAT.

- Self-IP: The IP that a Pod sees itself as is the same IP that others see it as.

The CNI (Container Network Interface)

Kubernetes doesn’t implement networking itself; it offloads this to CNI plugins (like Calico, Flannel, Cilium).

CNI Lifecycle & The Flow of a Pod

When a Pod is scheduled, several components coordinate to ensure it gets networking. Here is the visual flow:

sequenceDiagram

participant S as Scheduler

participant K as Kubelet

participant CRI as Container Runtime (CRI)

participant CNI as CNI Plugin

participant NS as Network Namespace

S->>K: Assign Pod to Node

K->>CRI: Create Pod Sandbox

CRI->>NS: Create Network Namespace

CRI->>CNI: Invoke ADD Command

CNI->>CNI: Create veth pair

CNI->>NS: Move eth0 to NS

CNI->>CNI: IPAM (Assign IP)

CNI->>NS: Configure Routing

CNI-->>CRI: Success

CRI-->>K: Pod Ready

K->>CRI: Start App Containers

- Scheduling: The Scheduler assigns a Pod to a Node. This is updated in the API Server.

- Kubelet Action: The Kubelet on the assigned Node watches the API Server. When it sees a new Pod assigned to it, it starts the creation process.

- CRI Invocation: Kubelet calls the Container Runtime Interface (CRI) to create the Pod sandbox.

- Network Namespace Creation: The Container Runtime creates a linux Network Namespace for the Pod. This isolates the Pod’s network stack from the host and other Pods.

- CNI Trigger: The CRI identifies the configured CNI plugin and invokes it with the

ADDcommand. - CNI Plugin Execution: The CNI Plugin performs the “Golden Rule” setup:

- veth pair: It creates a virtual ethernet pair.

- Plumbing: One end is kept in the host namespace, and the other is moved into the Pod’s namespace and renamed to

eth0. - IPAM: It calls an IPAM (IP Address Management) plugin to assign a unique IP from the Node’s allocated CIDR range.

- Routing: It configures the default gateway and routes inside the Pod so it can talk to the rest of the cluster.

- Success: The CNI returns success to the CRI, which then returns to the Kubelet.

- App Start: Finally, the Kubelet starts the actual application containers inside the now-networked sandbox.

Traffic leaves the Pod via eth0, enters the host via the other end of the veth pair, and is then handled by the CNI’s data plane (Bridge, Routing, or eBPF).

The Life of a Packet (Pod-to-Service)

Understanding how a packet travels from one Pod to another through a Service is key to mastering Kubernetes networking.

sequenceDiagram

participant PodA as Pod A (Node 1)

participant Node1 as Node 1 Kernel (kube-proxy)

participant Net as Physical Network

participant Node2 as Node 2 Kernel

participant PodB as Pod B (Node 2)

PodA->>Node1: Request to Service IP

Note over Node1: Intercept & DNAT (Service IP -> Pod B IP)

Note over Node1: Routing Decision (Pod B is on Node 2)

Node1->>Net: Send via CNI (Overlay/Direct)

Net->>Node2: Arrive at Node 2

Node2->>PodB: Forward to Pod Namespace

PodB-->>PodA: Response

Step-by-Step Journey:

- Request Initiation: Pod A (on Node 1) sends a request to a Service IP (ClusterIP).

- Kernel Interception: The packet leaves the Pod via the

vethpair and hits the Node 1 Kernel.kube-proxy(viaiptablesorIPVSrules) intercepts the packet in thenat/OUTPUTchain. - Destination NAT (DNAT): The Kernel performs DNAT, rewriting the destination IP from the Service’s Virtual IP (VIP) to the real IP of a healthy backend Pod (e.g., Pod B on Node 2).

- Routing Decision: The Kernel makes a routing decision. It determines that Pod B’s IP is reachable via the CNI’s interface (e.g., an overlay network like

vxlanor direct routing). - CNI Transmit: The CNI plugin encapsulates (if overlay) or routes the packet across the physical network to Node 2.

- Node 2 Arrival: The packet arrives at Node 2, is decapsulated by its CNI, and the Kernel identifies it’s destined for a local Pod.

- Success: The packet is forwarded into Pod B’s network namespace via its

vethpair. Pod B receives the request!

How Services match Pods

Services use a discovery mechanism to track which Pods should receive traffic. This process is driven by Label Selectors:

- Label Selectors: Defined in the Service’s specification, these core identifiers tell the cluster exactly which Pods to target. A Service (the stable front door) selects any Pod whose labels match its selector to be its backend.

- EndpointSlices: These are the dynamic list of targets (IPs and ports). The system automatically populates

EndpointSliceresources with matching Pods. By splitting the list into smaller “slices,” Kubernetes can scale efficiently to thousands of Pods, avoiding the bottlenecks of the legacyEndpointsresource.

Kubernetes Service Types

Kubernetes Services are built like building blocks, where each type typically adds a layer on top of the previous one:

- ClusterIP (Default): Exposes the Service on a cluster-internal IP. This is the foundation for almost all other Service types.

- NodePort: Exposes the Service on each Node’s IP at a static port (between 30000-32767). Critically: A

NodePortService automatically creates its ownClusterIPto route traffic to backend Pods. - LoadBalancer: Exposes the Service externally using a cloud provider’s load balancer. This builds upon both

NodePortandClusterIP, configuring the cloud to route external traffic to NodePorts. - ExternalName: Maps the Service to a DNS name (produces a

CNAMErecord). It bypasses selectors and proxying entirely, allowing you to treat external services as internal ones.

Headless Services

When you don’t need a single Virtual IP (VIP) to load balance traffic, you can create a Headless Service by setting .spec.clusterIP: None.

- Instead of the DNS returning a single ClusterIP, a query for a headless service returns the direct

Arecords (individual IPs) of all matching Pods. - This is essential for StatefulSets, where you need to reach specific Pod instances, or when implementing custom service discovery.

DNS in Kubernetes (CoreDNS)

DNS serves as the cluster’s phonebook, translating service names into IP addresses. In modern clusters, this is handled by CoreDNS.

- Architecture: CoreDNS runs as a Deployment (usually in the

kube-systemnamespace) and is exposed via a Service namedkube-dns. - Discovery: CoreDNS watches the Kubernetes API for new Services and EndpointSlices, dynamically generating DNS records.

- Client Config: The Kubelet configures every Pod’s

/etc/resolv.confto point at thekube-dnsService IP.

The Resolution Process

When a Pod queries a name like my-svc, the OS resolver iterates through the search domains defined in /etc/resolv.conf until it finds a match.

sequenceDiagram

participant App as Application

participant OS as OS Resolver (/etc/resolv.conf)

participant DNS as CoreDNS (kube-dns Service)

App->>OS: Resolve "my-svc"

Note over OS: iterate search domains

OS->>DNS: Query: my-svc.default.svc.cluster.local?

DNS-->>OS: A Record: 10.96.0.100 (Success)

OS-->>App: Return 10.96.0.100

Note over App,DNS: Scenario: External Domain (ndots:5)

App->>OS: Resolve "google.com"

OS->>DNS: Query: google.com.default.svc.cluster.local?

DNS-->>OS: NXDOMAIN

Note over OS: ... more internal retries ...

OS->>DNS: Query: google.com?

DNS-->>OS: A Record: 142.250.x.x

OS-->>App: Return IP

- Record Types:

- A Records: Resolve to a Service’s

ClusterIP(Standard) or multiple Pod IPs (Headless). - SRV Records: Created for named ports (e.g.,

_http._tcp.my-svc.ns.svc.cluster.local), allowing for dynamic port discovery. - CNAME Records: Used for

ExternalNameservices to point to external hostnames.

- A Records: Resolve to a Service’s

Performance & Scalability

As clusters grow, DNS can become a bottleneck or a source of latency.

- The “ndots:5” Trap: By default, if a name has fewer than 5 dots, Kubernetes tries internal search domains first. For external names like

api.github.com, this causes several failing internal queries (NXDOMAIN) before hititng the external resolver.- Pro Tip: Use a trailing dot (

google.com.) for external names to bypass the search path.

- Pro Tip: Use a trailing dot (

- NodeLocal DNSCache: Runs a DNS caching agent on every node as a DaemonSet. It drastically reduces latency and prevents conntrack exhaustion (UDP session tracking limits) in the Linux kernel during high DNS volume.

Debugging Kubernetes Networking

When network issues arise, follow a Bottom-Up troubleshooting flow, starting from the source Pod and moving up the abstraction layers.

flowchart TD

Start[Issue: Pod A cannot reach Service B] --> Net{1. Pod Networking OK?}

Net -- No --> FixNet[Check CNI / Routes / NetPol]

Net -- Yes --> DNS{2. DNS Resolution OK?}

DNS -- No --> FixDNS[Check CoreDNS / Config]

DNS -- Yes --> Svc{3. Service IP Reachable?}

Svc -- No --> FixSvc[Check kube-proxy / Spec]

Svc -- Yes --> EP{4. Endpoints Populated?}

EP -- No --> FixEP[Check Selectors / Readiness]

EP -- Yes --> App[5. Check Application Logs]

The Tool: Ephemeral Containers

Avoid installing debug tools in production images. Instead, use ephemeral containers to attach a “debug sidecar” (like netshoot) to a running Pod:

kubectl debug -it <pod-name> --image=nicolaka/netshoot

1. Pod Connectivity (The Foundation)

Verify the Pod can talk to the host and itself.

- Check IPs:

ip addr show(doeseth0matchkubectl get pod -o wide?) - Check Routes:

ip route show(is there a default gateway?) - Issue: If

eth0or routes are missing, the CNI plugin failed. Check CNI node logs (e.g.,calico-node,cilium-agent).

2. DNS (The Phonebook)

If the Pod has an IP, check if it can resolve names.

- Test Resolution:

nslookup my-service- NXDOMAIN: Name doesn’t exist (check namespace/spelling).

- Timeout: CoreDNS is unreachable (check CoreDNS pods and NetworkPolicies).

- Check Config:

cat /etc/resolv.conf(verify thenameserveris thekube-dnsService IP).

3. Services (The Virtual IP)

If DNS works, verify the Service and its endpoints.

- Test Connectivity:

nc -zv <service-ip> <port> - Check Endpoints:

kubectl get endpointslices -l kubernetes.io/service-name=<service-name> - Common Issue: Hairpin Traffic: A Pod failing to reach itself via its own Service IP. Ensure the Kubelet is running with

--hairpin-mode=hairpin-veth.

4. Packet Level (The Truth)

When logs aren’t enough, use tcpdump to see what’s on the wire.

- Capture:

tcpdump -i eth0 -w /tmp/capture.pcap - Analyze: Copy the file to your machine and open in Wireshark:

kubectl cp <pod-name>:/tmp/capture.pcap ./capture.pcap -c <debug-container-name>Look for TCP Retransmissions (network drops), RST (closed ports), or sent SYNs with no SYN-ACK (firewall/NetworkPolicy drops).

References

- Kubernetes Networking Series Part 1: The Model

- Kubernetes Networking Series Part 2: CNI & Pod Networking

- Kubernetes Networking Series Part 3: Services

- Kubernetes Networking Series Part 4: DNS

- Kubernetes Networking Series Part 5: Debugging

- The Kubernetes Network Model - Official Docs

Last updated: 2026-02-18

Kubernetes Storage: A Deep Dive

Storage in Kubernetes is designed to decouple the physical storage implementation from the application’s request for it. This allows for portable, infrastructure-agnostic deployments.

Stateless vs. Stateful Workloads

Understanding the nature of your workload is the first step in deciding how to handle storage:

- Stateless: Ephemeral, idempotent, and immutable. Containers can be replaced or rescheduled easily because they don’t store persistent state. Examples: Web servers, API gateways.

- Stateful: Requires durability and persistence. Data must survive Pod restarts, node failures, and upgrades. Examples: Databases (PostgreSQL, MongoDB), Message Brokers.

The Abstraction Stack

Kubernetes uses several layers to manage storage, moving from high-level requests to low-level implementation.

graph TD

PVC["PersistentVolumeClaim (PVC)"] -- requests --> SC["StorageClass"]

SC -- provisions --> PV["PersistentVolume (PV)"]

PV -- backed by --> Infra["Infrastructure Storage (EBS, Azure Disk, NFS)"]

Pod["Pod"] -- volumes --> PVC

Storage Lifecycle Flow

The complete path from developer intent to a running application with storage.

sequenceDiagram

participant User as Developer

participant K8s as K8s Control Plane

participant CSI_C as CSI Controller (Provisioner/Attacher)

participant Sched as K8s Scheduler

participant Kubelet as Node Kubelet (CSI Node Plugin)

User->>K8s: Create PVC

K8s->>CSI_C: Detect PVC (Provisioner)

CSI_C->>CSI_C: CreateVolume (CSI)

CSI_C-->>K8s: Create PV & Bind

User->>K8s: Create Pod

Sched->>K8s: Assign Pod to Node

K8s->>CSI_C: Trigger Attachment (Attacher)

CSI_C->>CSI_C: ControllerPublishVolume (CSI)

K8s->>Kubelet: Start Pod

Kubelet->>Kubelet: NodeStage & NodePublish (CSI)

Kubelet-->>User: Container Started with Volume

1. Persistent Volumes (PV)

A cluster-scoped resource representing actual storage. It has a lifecycle independent of any individual Pod that uses it.

- Phases:

Available→Bound→Released→Failed. - Reclaim Policies:

- Delete: Automatically deletes the underlying infrastructure when the PVC is deleted.

- Retain: Keeps the storage for manual cleanup (safer for production).

2. Persistent Volume Claims (PVC)

A namespace-scoped request for storage. It’s like a “voucher” that a Pod uses to get a PV.

- Binds: A PVC binds to a matching PV based on size and access modes.

- Access Modes:

ReadWriteOnce(RWO): One node can mount as read-write.- Why: Typically used for Block Storage (e.g., AWS EBS, Azure Disk). The filesystem is managed by the node’s kernel; concurrent access to the same raw block device from multiple nodes would lead to data corruption.

ReadOnlyMany(ROX): Many nodes can mount as read-only.- Why: Useful for sharing static data or assets (e.g., a shared web-server directory) across multiple Pods.

ReadWriteMany(RWX): Many nodes can mount as read-write.- Why: Requires File Storage (e.g., NFS, Azure Files, Amazon EFS). The storage backend handles file-level locking and concurrency, allowing multiple nodes to read/write safely.

3. StorageClasses

Policies for Dynamic Provisioning. Instead of manually creating PVs, an administrator defines a StorageClass. When a PVC request comes in, the cluster creates a PV on the fly.

- Binding Modes:

Immediate: Create volume as soon as PVC is created.WaitForFirstConsumer: Delay creation until the Pod is scheduled (best for multi-zone clusters).

Container Storage Interface (CSI)

The CSI moved storage drivers “out-of-tree,” allowing storage vendors to develop plugins independently of the Kubernetes core.

sequenceDiagram

participant K8s as K8s API Server

participant ExtP as External Provisioner

participant ExtA as External Attacher

participant CSID as CSI Driver (Controller/Node)

participant Kube as Kubelet

K8s->>ExtP: Watch: New PVC

ExtP->>CSID: CreateVolume (gRPC)

Note over CSID: Provision Backend Disk

ExtP-->>K8s: Create PersistentVolume (PV)

K8s->>ExtA: Watch: Pod scheduled to Node

ExtA->>CSID: ControllerPublishVolume (gRPC)

Note over CSID: Attach Disk to VM/Host

K8s->>Kube: Pod assigned to local node

Kube->>CSID: NodeStageVolume (gRPC)

Note over CSID: Format & Prep Global Mount

Kube->>CSID: NodePublishVolume (gRPC)

Note over CSID: Bind Mount into Pod Directory

- Controller Plugin: Handles cluster-wide tasks like provisioning and attaching.

- Node Plugin: Runs on every node to handle mounting (

NodeStage/NodePublish).

StatefulSets & Storage

StatefulSets are uniquely designed for applications requiring stable identities and storage.

- volumeClaimTemplates: Creates a unique PVC for each Pod ordinal (e.g.,

db-0,db-1). - Stable Identity: If

db-0crashes and is rescheduled, it will re-attach to the same PVC it had before. - PVC Retention Policy: (K8s 1.27+) Control if PVCs are deleted when a StatefulSet is scaled down.

Troubleshooting Guide (At a Glance)

When storage issues arise, use these specific flows to pinpoint the failure.

Case 1: PVC is stuck in Pending

This usually happens during the Provisioning phase.

flowchart TD

Start[PVC stuck in Pending] --> SC{Default StorageClass?}

SC -- No --> SetSC[Specify SC or set default]

SC -- Yes --> Match{Matching PV?}

Match -- Yes --> Bind[Wait for Binding]

Match -- No --> Dynamic{SC allow dynamic?}

Dynamic -- No --> CreatePV[Static Provisioning Required]

Dynamic -- Yes --> FirstConsumer{"WaitForFirstConsumer?"}

FirstConsumer -- Yes --> SchedulePod["Schedule Pod to Node first"]

FirstConsumer -- No --> Events["Check describe PVC Events: Quota, Permissions"]

Case 2: Pod is stuck in ContainerCreating

This occurs during the Attachment or Mounting phases.

flowchart TD

Start[Pod in ContainerCreating] --> Attached{Volume Attached?}

Attached -- No --> MultiAttach{Multi-Attach Error?}

MultiAttach -- Yes --> Detach[Force Detach or wait for Old Node]

MultiAttach -- No --> CSIController[Check CSI Controller Logs]

Attached -- Yes --> Mounted{Node Mounted?}

Mounted -- No --> CSINode[Check CSI Node Plugin Logs]

Mounted -- Yes --> SecretConfig{ConfigMap/Secret present?}

SecretConfig -- No --> CreateResources[Create missing resources]

SecretConfig -- Yes --> Permissions[Check SecurityContext & fsGroup]

Case 3: PVC is stuck in Terminating

This happens when you try to delete a volume that is still in use.

flowchart TD

Start[PVC stuck in Terminating] --> Clean[Check for Pod consumers]

Clean --> Finalizer{Finalizer: pvc-protection?}

Finalizer -- Yes --> RunningPod{"Healthy Pod using it?"}

RunningPod -- Yes --> DeletePod["Delete Pod first"]

RunningPod -- No --> Zombie["Check Node for zombie mount"]

Zombie -- Yes --> Unmount["Force Unmount from Node"]

Zombie -- No --> Force["Remove Finalizer - AS LAST RESORT"]

Summary of Debug Commands

| Failure Layer | Primary Command | Search For | | :— | :— | :— | | PVC | kubectl describe pvc <name> | Events section for provisioner errors. | | CSI Control | kubectl logs csi-provisioner-... | gRPC CreateVolume failures. | | Attachment | kubectl get volumeattachment | isAttached: true and attached: false. | | Node/Mount | kubectl describe pod <name> | FailedMount or FailedAttach events. | | Permissions | kubectl exec -it <pod> -- ls -l | Owner UID/GID of the mount point. |

References

Last updated: 2026-02-28

Concepts

Cloud Native: Kubernetes + GPU

GPU Infrastructure & Scheduling

NVIDIA GPU Operator

The NVIDIA GPU Operator automates the management of all NVIDIA software components needed to provision GPUs in Kubernetes.

Core Components (Operands)

- NVIDIA Driver: Low-level kernel drivers (can be containerized).

- NVIDIA Container Toolkit: Configures container runtimes (containerd/CRI-O) to mount GPU resources.

- NVIDIA Device Plugin: Traditional mechanism for exposing GPUs as extended resources (

nvidia.com/gpu). - GPU Feature Discovery (GFD): Labels nodes with GPU attributes (model, memory, capabilities).

- DCGM Exporter: Exports GPU telemetry (utilization, power, temperature) for Prometheus.

- MIG Manager: Manages Multi-Instance GPU (MIG) partitioning.

Common Configuration (Helm)

helm install gpu-operator nvidia/gpu-operator \

--set driver.enabled=true \

--set toolkit.enabled=true \

--set psp.enabled=false

Resource Allocation: CDI & DRA

CDI (Container Device Interface)

Standardizes how third-party devices are made available to containers, replacing runtime-specific hooks with a declarative JSON descriptor.

DRA (Dynamic Resource Allocation)

Next-generation resource management API (K8s v1.26+) moving beyond Device Plugins.

ResourceClaim: A request for specific hardware (like PVC).- Rich Filtering: Use CEL (Common Expression Language) to request specific attributes (e.g.,

device.memory >= 80Gi).

GPU Sharing Strategies

Maximize utilization by sharing physical GPUs across multiple workloads.

| Technology | Use Case | Isolation | Memory Sharing |

|---|---|---|---|

| MIG | Multi-tenant, inference | Hardware (Full) | No (partitioned) |

| vGPU | VMs, legacy apps | Hardware | No (allocated) |

| Time-slicing | Dev/test, burstable | None (Software) | Yes (shared) |

| MPS | CUDA streams | Partial | Yes |

NVIDIA MIG (Multi-Instance GPU)

Partitions A100/H100 GPUs into smaller instances with dedicated resources.

1g.10gb- 1/7 GPU, 10GB memory2g.20gb- 2/7 GPU, 20GB memory3g.40gb- 3/7 GPU, 40GB memory

Time-Slicing Config

sharing:

timeSlicing:

replicas: 4

Last updated: 2026-03-07

GPU Monitoring with NVIDIA DCGM

Data Center GPU Manager (DCGM) is the industry standard for monitoring and managing NVIDIA GPUs in cluster environments.

DCGM Key Metrics

DCGM provides a wide range of metrics, classified into health, usage, and profiling categories.

| Metric | DCGM Field Name | Description |

|---|---|---|

| GPU Utilization | DCGM_FI_DEV_GPU_UTIL | Traditional activity percentage (see MIG section below) |

| Memory Used | DCGM_FI_DEV_FB_USED | Amount of frame buffer memory used |

| Temperature | DCGM_FI_DEV_GPU_TEMP | Core temperature in degrees Celsius |

| Power Usage | DCGM_FI_DEV_POWER_USAGE | Instantaneous power draw in Watts |

| PCIE Throughput | DCGM_FI_PROF_PCIE_TX_BYTES | Data transferred over PCIe bus |

Monitoring MIG (Multi-Instance GPU)

When using MIG (A100/H100), traditional utilization metrics like GPU_UTIL often fail or report incorrectly at the partition level.

GPU_UTIL vs GR_ENGINE_ACTIVE

[!IMPORTANT] For MIG partitions, always use

DCGM_FI_PROF_GR_ENGINE_ACTIVEinstead ofDCGM_FI_DEV_GPU_UTIL.

GPU_UTIL(DCGM_FI_DEV_GPU_UTIL): Reports if any kernel is executing. It doesn’t accurately reflect resource consumption within a MIG slice.GR_ENGINE_ACTIVE(DCGM_FI_PROF_GR_ENGINE_ACTIVE): Measures the Graphics Engine activity. This provides a more precise utilization value for both graphics and compute workloads and is fully supported on individual MIG instances.

Other Profiling Metrics for MIG

DCGM_FI_PROF_SM_ACTIVE: SM (Streaming Multiprocessor) activity.DCGM_FI_PROF_SM_OCCUPANCY: Ratio of active warps to maximum warps.DCGM_FI_PROF_PIPE_TENSOR_ACTIVE: Utilization of Tensor Cores (critical for LLM/AI).

Kubernetes Integration

In Kubernetes, monitoring is typically handled by dcgm-exporter.

Deployment with Helm

helm repo add nvidia https://helm.ngc.nvidia.com/nvidia

helm repo update

helm install dcgm-exporter nvidia/dcgm-exporter \

--namespace gpu-operator \

--set arguments={-f,/etc/dcgm-exporter/default-counters.csv}

Scraping with Prometheus

dcgm-exporter exposes a /metrics endpoint. In Kubernetes, use a ServiceMonitor:

apiVersion: monitoring.coreos.com/v1

kind: ServiceMonitor

metadata:

name: dcgm-exporter

spec:

selector:

matchLabels:

app.kubernetes.io/name: dcgm-exporter

endpoints:

- port: metrics

interval: 15s

MIG Pod Metrics

When dcgm-exporter runs, it automatically appends Kubernetes metadata (pod name, namespace, container name) to the GPU metrics. For MIG, it uses the GPU-L0 (or similar) device identifier to map specific partitions to the pods consuming them.

Last updated: 2026-02-18

GPU Monitoring & Metrics

NVIDIA DCGM (Data Center GPU Manager)

The industry standard for managing and monitoring NVIDIA GPUs in clusters.

Key Metrics Reference

| Metric | Field Name | Description |

|---|---|---|

| Compute Util | DCGM_FI_DEV_GPU_UTIL | Traditional activity % |

| GR Engine | DCGM_FI_PROF_GR_ENGINE_ACTIVE | Use for MIG partitions |

| Memory Used | DCGM_FI_DEV_FB_USED | FB memory usage |

| PCIe Bandwidth | DCGM_FI_PROF_PCIE_RX_BYTES | Bytes received over PCIe |

| Power Usage | DCGM_FI_DEV_POWER_USAGE | Instantaneous draw in Watts |

Monitoring MIG Instances

[!IMPORTANT] For MIG partitions, always use

GR_ENGINE_ACTIVEinstead ofGPU_UTIL. Traditional utilization metrics often report incorrectly at the partition level.

Advanced Profiling Metrics

DCGM_FI_PROF_SM_ACTIVE: SM (Streaming Multiprocessor) activity.DCGM_FI_PROF_PIPE_TENSOR_ACTIVE: Tensor Core utilization (Critical for LLMs).

Kubernetes Integration

Deployment (dcgm-exporter)

Runs as a DaemonSet to expose metrics to Prometheus. It automatically appends Pod/Container metadata to the metrics.

# Prometheus ServiceMonitor

endpoints:

- port: metrics

interval: 15s

Last updated: 2026-03-07

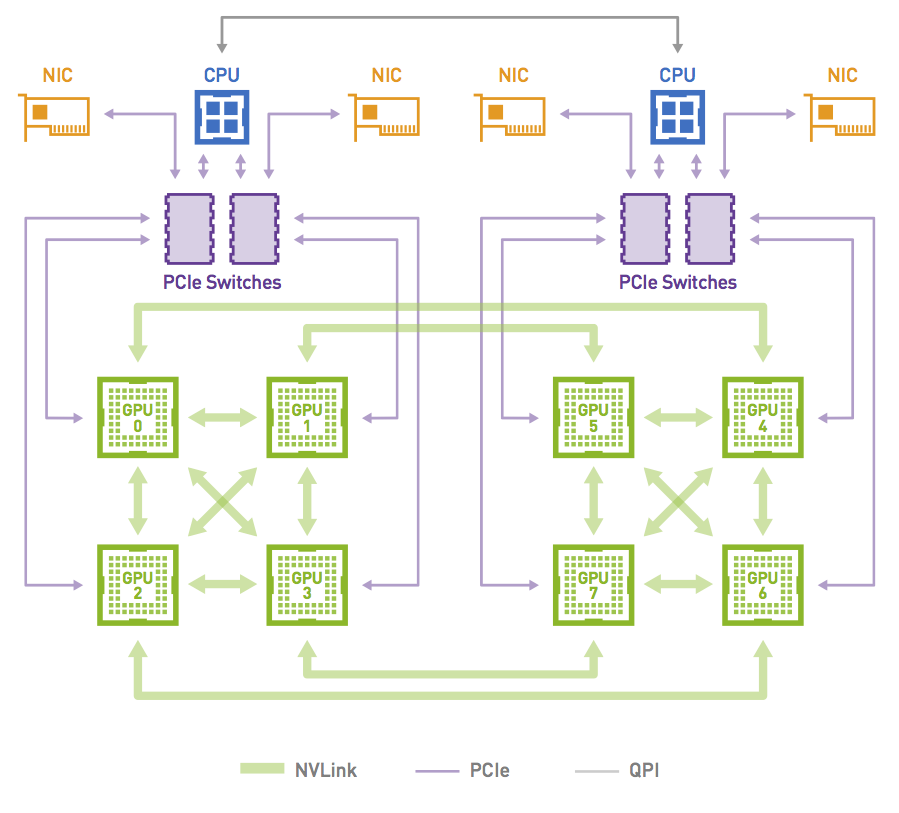

GPU Performance: Data Movement & Bottlenecks

Understanding how data flows through a system is critical for identifying why a GPU might be underutilized.

How Data Moves

The journey of data from storage to the GPU execution unit involves multiple hops, each a potential bottleneck.

1. Storage to CPU RAM

Data is loaded from disk (SSD, Parallel Filesystem like Lustre/WEKA) into Host Memory (RAM).

- Bottleneck: I/O throughput of the storage system or network (if using remote storage).

2. CPU RAM to GPU VRAM (The PCIe Pipe)

The CPU orchestrates the transfer of data from RAM to the GPU’s onboard memory (VRAM) via the PCIe bus.

- Bottleneck: PCIe bandwidth. Even PCIe Gen 5 (64GB/s x16) is significantly slower than GPU VRAM bandwidth (>2TB/s on H100).

- Optimization: Use GPUDirect Storage (GDS) to bypass the CPU and move data directly from storage/NIC to GPU memory.

3. GPU to GPU (NVLink)

In multi-GPU setups, gradients and data are exchanged between GPUs.

- Bottleneck: PCIe is often too slow for this. NVLink provides a dedicated, high-speed interconnect (up to 900GB/s on H100) that allows GPUs to talk directly without involving the CPU.

Debugging Bottlenecks with DCGM

To identify where the “stall” is happening, monitor specific DCGM metrics and follow these decision paths.

Identifying the Bottleneck

graph TD

Start[GPU-Util shows 80% but job is slow] --> DCGM{DCGM profiling metrics available?}

DCGM -- Yes (Datacenter GPU) --> SM_Active{Check SM Active}

DCGM -- No (Consumer GPU) --> SMI[Use nvidia-smi signals: Temp + Clock + Memory-Util]

SM_Active -- "High > 70%" --> DRAM_Active{Check DRAM Active}

SM_Active -- "Low < 30%" --> Transfers[Check PCIe/NVLink throughput: PCIE_RX_BYTES, PCIE_TX_BYTES]

SM_Active -- "30-70%" --> Mixed[Mixed signals: Check temp + clock + transfers]

DRAM_Active -- "High > 70%" --> MemBound[Memory-bound workload: Consider smaller batches]

DRAM_Active -- Low --> Tensor{Check Tensor Pipeline}

Tensor -- "High > 70%" --> ComputeBound[Compute-bound: Hitting fast path]

Tensor -- Low --> NoTensor[Not using tensor cores: Check FP16/BF16 settings]

SMI --> SMI_Heuristic{High GPU-Util + High Temp + High Clock?}

SMI_Heuristic -- Yes --> LikelyCompute[Likely compute-bound]

SMI_Heuristic -- No --> LikelyStalled[Likely stalled/waiting: Check memory utilization]

style MemBound fill:#f96,stroke:#333

style ComputeBound fill:#9f9,stroke:#333

style LikelyCompute fill:#9f9,stroke:#333

style LikelyStalled fill:#f96,stroke:#333

style Transfers fill:#f96,stroke:#333

Workload Specific Flowcharts

1. Training (Steady, long-running)

graph TD

T_Start{SM Active sustained over time?}

T_Start -- Yes --> T_DRAM{DRAM Active matches model expectations?}

T_Start -- "No (but GPU-Util high)" --> T_RedFlag[Red flag: GPU-Util high but SMs idle]

T_DRAM -- Yes --> T_Phys{Power, temp, clocks stable?}

T_DRAM -- No --> T_MemAccess[Check memory access patterns: Possible underutilization]

T_Phys -- Yes --> T_Healthy[Healthy training: Sustained throughput confirmed]

T_Phys -- No --> T_Throttling[Thermal or power throttling: Throughput dropping]

T_RedFlag --> T_Bottleneck[Stalls or waits, not real compute]

T_Bottleneck --> T_IO[Check transfer metrics: Data pipeline bottleneck?]

T_Bottleneck --> T_Sync[Check sync patterns: Gradient sync overhead?]

style T_Healthy fill:#9f9,stroke:#333

style T_Throttling fill:#f96,stroke:#333

style T_RedFlag fill:#f66,stroke:#333

2. Inference (Bursty, latency-sensitive)

graph TD

I_Start{SM Active high during request bursts?}

I_Start -- Yes --> I_Mem{Memory pressure spikes as expected?}

I_Start -- No --> I_Clock{Clocks ramping up when requests arrive?}

I_Mem -- Yes --> I_Tail{Tail latency P95/P99 acceptable?}

I_Mem -- No --> I_Compute[Not memory-bound during bursts: Check compute patterns]

I_Tail -- Yes --> I_Healthy[Healthy inference: GPU active when needed]

I_Tail -- No --> I_Queue[Check queuing, preprocessing or post-processing]

I_Clock -- Yes --> I_Pipeline[Input data not ready: Check data pipeline]

I_Clock -- No --> I_Power[Clock ramp-up delay or power management issue]

style I_Healthy fill:#9f9,stroke:#333

style I_Pipeline fill:#f96,stroke:#333

style I_Power fill:#f96,stroke:#333

Summary of Data Travel Paths

graph TD

Paths[Three paths data travels]

Paths --> P1[Host -> GPU: PCIe 16-32 GB/s]

Paths --> P2[GPU -> GPU: NVLink 300-900 GB/s]

Paths --> P3[GPU Memory -> SMs: HBM ~2 TB/s]

SM{SM Active?}

SM -- High --> C_Bound[Compute-bound: SMs busy]

SM -- Low --> Interconnect{PCIe/NVLink traffic high?}

Interconnect -- Yes --> T_Bottleneck[Transfer bottleneck: Waiting for data]

Interconnect -- No --> D_Active{DRAM Active high?}

D_Active -- Yes --> M_Bound[Memory-bound: GPU memory is the limiter]

D_Active -- No --> S_Check[Check kernel launches, sync or scheduling]

style C_Bound fill:#9f9,stroke:#333

style T_Bottleneck fill:#f96,stroke:#333

style M_Bound fill:#f96,stroke:#333

| Metric | Focus | Insight |

|---|---|---|

DCGM_FI_PROF_PCIE_TX_BYTES | PCIe Outbound | High values indicate heavy data transfer from GPU to Host. |

DCGM_FI_PROF_PCIE_RX_BYTES | PCIe Inbound | High values indicate the CPU is feeding the GPU at the bus limit. |

DCGM_FI_DEV_MEM_COPY_UTIL | Memory Controller | Percentage of time spent moving data in/out of VRAM. |

DCGM_FI_DEV_GPU_UTIL | Compute Engine | If this is low while PCIE_RX is high, the GPU is Data Starved. |

Interpreting Graphs

[!TIP] The “Data Stall” Pattern: You see low

GPU_UTIL(e.g., 20-30%) butPCIE_RX_BYTESis pegged at the theoretical maximum of your PCIe generation. This confirms the bottleneck is the PCIe bus.

[!IMPORTANT] MIG Bottlenecks: When using MIG, remember that the PCIe bandwidth is shared across all instances on the physical GPU. One aggressive instance can starve others.

Performance Checklist

- Check PCIe Link Speed: Ensure the GPU is actually negotiated at its maximum rated speed (e.g., x16 Gen4).

- Monitor NVLink Error Rates: Use

nvidia-smi nvlink -g 0to check for CRC errors which might indicate faulty hardware slowing down transfers. - CPU Affinity: Ensure the process is pinned to the CPU socket physically closest to the GPU to minimize PCIe latency.

Last updated: 2026-03-07

GPU Sharing in Kubernetes

Overview of GPU sharing technologies for maximizing GPU utilization in Kubernetes clusters.

Technologies Comparison

| Technology | Use Case | Isolation | Memory Sharing |

|---|---|---|---|

| MIG | Multi-tenant, inference | Hardware | No (partitioned) |

| vGPU | VMs, legacy apps | Full | No (allocated) |

| Time-slicing | Dev/test, burstable | None | Yes (shared) |

| MPS | CUDA streams | Partial | Yes |

NVIDIA MIG (Multi-Instance GPU)

MIG partitions A100/H100 GPUs into smaller instances with dedicated resources.

Supported Profiles (A100 80GB)

1g.10gb- 1/7 GPU, 10GB memory2g.20gb- 2/7 GPU, 20GB memory3g.40gb- 3/7 GPU, 40GB memory7g.80gb- Full GPU

Configuration

# Enable MIG mode

nvidia-smi -i 0 -mig 1

# Create MIG instances

nvidia-smi mig -cgi 9,9,9,9,9,9,9 -i 0

# List instances

nvidia-smi mig -lgi

Time-Slicing

Share a single GPU across multiple pods with time-based multiplexing.

ConfigMap Example

apiVersion: v1

kind: ConfigMap

metadata:

name: time-slicing-config

data:

any: |-

version: v1

sharing:

timeSlicing:

replicas: 4

Last updated: 2026-02-09

GPU Operator, CDI, and DRA

Modern Kubernetes infrastructure for managing accelerator lifecycle, standardizing device access, and dynamic resource management.

NVIDIA GPU Operator

The NVIDIA GPU Operator automates the management of all NVIDIA software components needed to provision GPUs in Kubernetes.

flowchart TD

Operator["NVIDIA GPU OPERATOR"]

NFD["NFD"]

subgraph GPUNode ["GPU Node"]

Drivers["NVIDIA Drivers"]

DevicePlugin["Device Plugin"]

Toolkit["Container Toolkit"]

DCGM["DCGM"]

end

Operator -.-> NFD

Operator -.-> Drivers

Operator -.-> DevicePlugin

Operator -.-> Toolkit

Operator -.-> DCGM

classDef operator fill:#3b82f6,color:#fff,stroke:#2563eb,stroke-width:2px

classDef nfd fill:#fff,stroke:#ef4444,color:#ef4444,stroke-width:2px,rx:10,ry:10

classDef drivers fill:#eff6ff,stroke:#3b82f6,color:#3b82f6,stroke-width:2px,rx:10,ry:10

classDef plugin fill:#fefce8,stroke:#ca8a04,color:#ca8a04,stroke-width:2px,rx:10,ry:10

classDef toolkit fill:#f0fdf4,stroke:#16a34a,color:#16a34a,stroke-width:2px,rx:10,ry:10

classDef dcgm fill:#faf5ff,stroke:#9333ea,color:#9333ea,stroke-width:2px,rx:10,ry:10

classDef node fill:#fdfbf7,stroke:#333,stroke-width:1px

class Operator operator

class NFD nfd

class Drivers drivers

class DevicePlugin plugin

class Toolkit toolkit

class DCGM dcgm

class GPUNode node

Every available GPU nodes will be configured with required components and configurations

Core Components (Operands)

- NVIDIA Driver: Low-level kernel drivers (can be containerized).

- NVIDIA Container Toolkit: Configures container runtimes (containerd/CRI-O) to mount GPU resources.

- NVIDIA Device Plugin: Traditional mechanism for exposing GPUs as extended resources (

nvidia.com/gpu). - GPU Feature Discovery (GFD): Labels nodes with GPU attributes (model, memory, capabilities).

- DCGM Exporter: Exports GPU telemetry (utilization, power, temperature) for Prometheus.

- MIG Manager: Manages Multi-Instance GPU (MIG) partitioning.

Common Configuration (Helm)

helm install gpu-operator nvidia/gpu-operator \

--set driver.enabled=true \

--set toolkit.enabled=true \

--set psp.enabled=false

CDI (Container Device Interface)

CDI is an open specification for container runtimes (containerd, CRI-O) to standardize how third-party devices are made available to containers.

- Standardization: Replaces runtime-specific hooks with a declarative JSON descriptor.

- Mechanism: The device plugin returns a fully qualified device name (e.g.,

nvidia.com/gpu=0), and the runtime uses the CDI spec to inject device nodes, environment variables, and mounts. - Benefits: Simplifies the path from device plugin to low-level runtime (runc), moving complex logic out of the runtime itself.

DRA (Dynamic Resource Allocation)

DRA is the next-generation resource management API in Kubernetes (introduced in v1.26, evolving in v1.31+), moving beyond the limitations of the Device Plugin API.

Key Concepts

ResourceClaim: A request for specific hardware resources (similar to PVC for storage).DeviceClass: Defines categories of devices (e.g., “high-memory-gpus”) with specific filters.ResourceSlice: Represents the actual hardware availability on nodes.

Benefits over Device Plugins

- Rich Filtering: Use CEL (Common Expression Language) to request specific attributes (e.g.,

device.memory >= 24Gi). - Device Sharing: Better native support for sharing devices across multiple containers/pods.

- Hardware Topology: Improved awareness of PCIe/NVLink topologies for multi-GPU workloads.

- Decoupled Lifecycle: Allocation happens during scheduling, allowing for more complex “all-or-nothing” scheduling for multi-node jobs.

Example Claim

apiVersion: resource.k8s.io/v1alpha3

kind: ResourceClaim

metadata:

name: gpu-claim

spec:

devices:

requests:

- name: my-gpu

deviceClassName: nvidia-h100

selectors:

- cel: "device.memory >= 80Gi"

Last updated: 2026-03-02

GPU Performance & Troubleshooting

Identifying Bottlenecks

Follow these decision paths to find out why your workload is slow.

graph TD

Start[GPU-Util shows 80% but job is slow] --> DCGM{DCGM profiling metrics available?}

DCGM -- Yes (Datacenter GPU) --> SM_Active{Check SM Active}

DCGM -- No (Consumer GPU) --> SMI[Use nvidia-smi signals: Temp + Clock + Memory-Util]

SM_Active -- "High > 70%" --> DRAM_Active{Check DRAM Active}

SM_Active -- "Low < 30%" --> Transfers[Check PCIe/NVLink throughput: PCIE_RX_BYTES, PCIE_TX_BYTES]

DRAM_Active -- "High > 70%" --> MemBound[Memory-bound workload]

DRAM_Active -- Low --> Tensor{Check Tensor Pipeline}

Tensor -- High --> ComputeBound[Compute-bound]

Tensor -- Low --> NoTensor[Not using tensor cores]

style MemBound fill:#f96,stroke:#333

style ComputeBound fill:#9f9,stroke:#333

style Transfers fill:#f96,stroke:#333

(See detailed training/inference flowcharts in the full note content)

Hardware Faults & XIDs

XID errors are reports from the NVIDIA driver indicating hardware or driver-level failures.

Common XID Codes

- XID 31 (Page Fault): Invalid memory access. Software or faulty HW.

- XID 61 (Internal Error): Firmware error, usually requires reboot.

- XID 79 (Falling off the Bus): GPU is unresponsive. PCIe link issue.

ECC Errors

- Single-Bit (SBE): Automatically corrected.

- Double-Bit (DBE): Uncorrectable. Crashes application to prevent corruption. Requires GPU reset.

Diagnostic Checklist

- PCIe Link Speed: Verify

x16 Gen4/5negotiation. - Thermal Throttling: Check if Clocks drop under load.

- CPU Affinity: Ensure Pod is on the same NUMA node as the GPU.

Last updated: 2026-03-07

Kubernetes Device Plugins

By default, Kubernetes has no idea what a GPU is. It only understands resources like CPU and memory. To make Kubernetes aware of GPUs, you need the Device Plugin framework.

It is basically a set of APIs that allows third-party hardware vendors like NVIDIA, AMD to create plugins that advertise specialized hardware (like GPUs or other accelerators) to the Kubernetes scheduler.

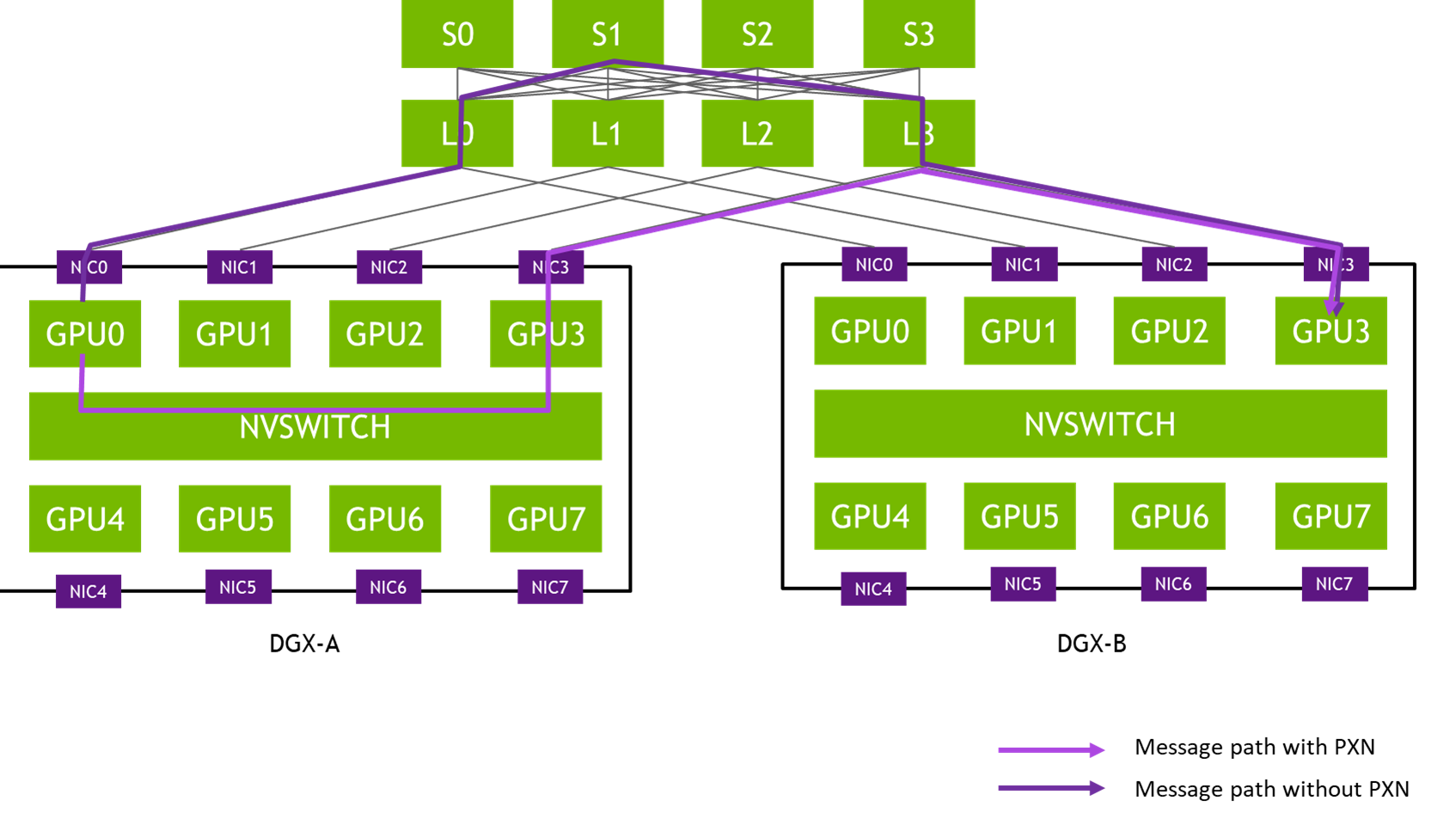

The following diagram illustrates what happens when you install a Device Plugin on a GPU Node.

Here is how it works:

Device plugins run on specific GPU nodes as DaemonSets. They register with the kubelet and communicate via gRPC.

They let nodes show their GPU hardware, like NVIDIA or AMD, to the kubelet.

The kubelet shares this information with the API server, so the scheduler knows which nodes have GPUs.

Scheduling Pods With GPU

Once the device plugin is set up, you can request a GPU in your Pod spec, like this:

resources:

limits:

nvidia.com/gpu: 1

Once you deploy the pod spec, the scheduler sees your GPU request and finds a node with available NVIDIA GPUs. The pod gets scheduled to that node.

Once scheduled, the kubelet invokes the device plugin’s Allocate() method to reserve a specific GPU. The plugin then provides the necessary details like the GPU device ID. Using this information, the kubelet launches your container with the appropriate GPU configurations.

The following image illustrates the detailed flow of an NVIDIA device plugin:

flowchart LR

subgraph ControlPlane[" "]

direction TB

APIServer["API Server"]

Scheduler["Scheduler"]

APIServer --> Scheduler

end

subgraph GPUNode["Worker Node (NVIDIA GPU)"]

direction TB

KUBELET["kubelet"]

PLUGIN["NVIDIA Device Plugin<br>(DaemonSet)"]

PODS["App<br>Pods"]

GPUS["GPUs"]

PLUGIN -. Register .-> KUBELET

PLUGIN <-->|gRPC| KUBELET

KUBELET -- Request --> PLUGIN

PLUGIN -- Allocate --> KUBELET

KUBELET --> PODS

PODS -. "Acess<br>GPUs" .-> GPUS

end

Scheduler -- "Create<br>Pod" --> KUBELET

KUBELET -. "Update<br>Node Resources<br>(GPU)" .-> APIServer

classDef bg fill:#f9fafb,stroke:#e5e7eb,stroke-width:1px

classDef kubelet fill:#add8e6,stroke:#000

classDef plugin fill:#90ee90,stroke:#000

classDef sched fill:#d8bfd8,stroke:#000

classDef pod fill:#ffe4b5,stroke:#000

class ControlPlane,GPUNode bg

class KUBELET kubelet

class PLUGIN plugin

class Scheduler sched

class PODS,GPUS pod

Concepts

Cloud Native: Observability

Kubernetes observability is the process of collecting and analyzing metrics, logs, and traces (the “three pillars of observability”) to understand the internal state, performance, and health of a cluster.

1. Prometheus Architecture

Prometheus is an open-source systems monitoring and alerting toolkit. It is designed for reliability and is the industry standard for cloud-native observability.

Core Components

- Prometheus Server: Scrapes metrics from instrumented jobs, stores them in a local TSDB, and runs rules over the data.

- Service Discovery: Automatically identifies targets in dynamic environments (like Kubernetes).

- Pushgateway: Supports short-lived jobs that cannot be scraped via the pull model.

- Alertmanager: Handles alerts sent by the Prometheus server, deduplicating, grouping, and routing them to notification providers.

- PromQL: A powerful functional query language designed for time series data.

2. Node Exporter Deep Dive

Node Exporter is the standard agent for harvesting hardware and OS metrics from *NIX kernels. It is designed to be stateless and lightweight.

The Flow of Metrics

Node Exporter doesn’t store data. When Prometheus initiates a scrape, Node Exporter reads the current values from the Linux kernel’s virtual filesystems (/proc and /sys) and converts them into the Prometheus Exposition Format.

Internal Mechanics

- Collectors: Specialized modules (e.g.,

cpu,meminfo,diskstats) that delegate gathering specific metrics. - Textfile Collector: Allows exporting custom metrics from static files, useful for batch jobs or hardware RAID status.

- No Reliance on Syscalls: Whenever possible, it reads from

/procto avoid the overhead of context switches from system calls.

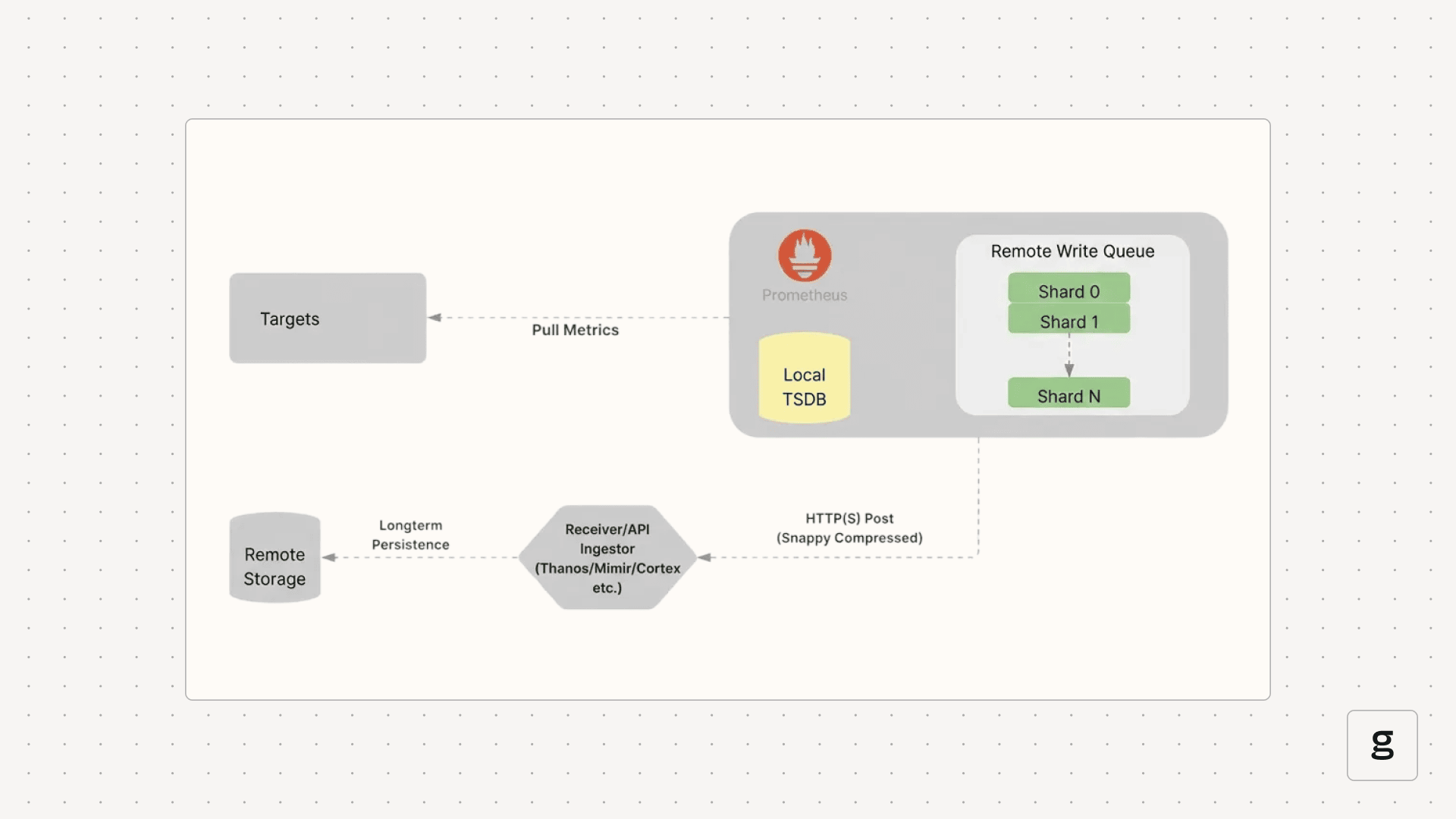

3. Remote Write & Scalability

Prometheus Remote Write allows shipping time series samples to a remote storage backend immediately after they are scraped and written to the local TSDB.

Why Remote Write?

- Long-Term Storage: Local Prometheus TSDBs are typically optimized for short-term retention (e.g., 15 days). Remote Write enables archiving years of data in cloud storage.

- Global View: Consolidate metrics from multiple clusters into a single centralized hub (e.g., Grafana pointing to a central Cortex/Mimir instance).

- High Availability: Feed data into distributed systems built for resilience.

Mechanism: Sharding & Queues

To handle high throughput, Remote Write uses an in-memory queue managed by concurrent shards (worker threads).

- Data Ordering: Samples for the same unique time series are always routed to the same shard to ensure correct ingestion order.

- Retry Logic: Shards implement exponential backoff to handle transient network issues or remote endpoint errors.

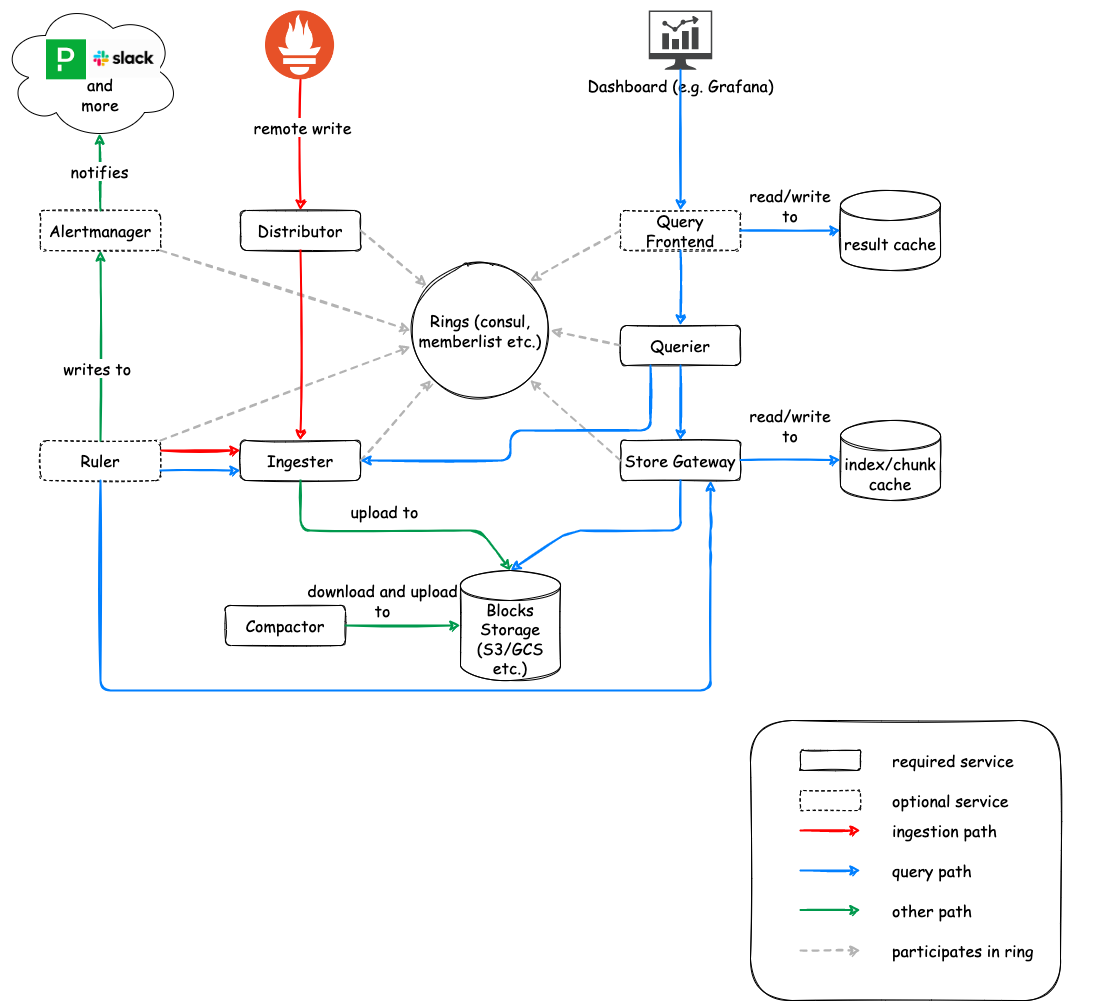

4. Federated Observability: Cortex

Cortex is a horizontally scalable, highly available, multi-tenant, long-term storage for Prometheus. It is built as a set of microservices.

Key Microservices

- Distributor: Handles incoming samples.

- Consistent Hashing: Uses a “hash ring” to route data to the correct Ingesters.

- HA Tracker: Deduplicates samples from redundant Prometheus pairs by tracking leader status via

clusterandreplicalabels. - Quorum Writes: Ensures durability by waiting for a majority of Ingesters to acknowledge the write.

- Ingester: Statefully caches incoming samples in memory.

- WAL (Write Ahead Log): Records data before caching to prevent loss during crashes.

- Chunking: Flushes data blocks to long-term storage (S3, GCS, Azure Blob) once they reach a certain size or age.

- Querier: Executes PromQL queries by fetching data from both Ingesters (for recent data) and long-term storage (via Store Gateway).

Summary: The Metrics Pipeline

- Kubernetes Components: Emit metrics via

/metrics(e.g., Kubelet, API Server). - Enrichment:

kube-state-metricsadds context about object status. - Logs: Nodes use agents like Fluent Bit to forward logs to central stores (e.g., Loki).

- Traces: OpenTelemetry (OTLP) standardized spans are processed via OTel Collectors and stored in backends like Tempo or Jaeger.

References:

Concepts

AI Inference

AI Inference Fundamentals

Efficiently serving Large Language Models (LLMs) requires specialized techniques to overcome memory bottlenecks and maximize throughput.

KV Cache (Key-Value Cache)

In autoregressive decoding, each generated token depends on all previous tokens. To avoid recomputing the attention “keys” and “values” for every new token, they are stored in GPU memory.

- Large: Can take gigabytes for long sequences (e.g., ~1.7GB for a 13B model at 2048 tokens).

- Dynamic: Sizes change based on sequence length, leading to memory management challenges.

- The Problem: Traditional systems over-reserve memory for the maximum possible sequence length (Internal Fragmentation) or fail to reclaim gaps (External Fragmentation), losing 60-80% of actual GPU capacity.

Time to First Token (TTFT)

TTFT is the latency between request submission and the first output token. It is the most critical metric for interactive user experience.

Prefill Phase (Compute-Bound)

The model processes the entire input prompt at once to populate the KV cache. This phase is limited by the GPU’s TFLOPS (compute capacity).

Decoding Phase (I/O-Bound)

Tokens are generated one by one. Each step requires loading the model weights and the KV cache from VRAM to the processors. This phase is limited by Memory Bandwidth.

[!TIP] Optimizing TTFT involves minimizing queuing delays and using efficient “Chunked Prefill” to balance prompt processing with ongoing token generation.

Last updated: 2026-03-25

vLLM & PagedAttention

vLLM is a high-throughput LLM serving engine. Its “secret sauce” is PagedAttention, an algorithm inspired by virtual memory paging in operating systems.

The PagedAttention Mechanism

Instead of allocating contiguous memory for a sequence’s KV cache (which leads to fragmentation), PagedAttention partitions it into fixed-size physical blocks.

Logical vs. Physical Mapping

Contiguous logical blocks of a sequence are mapped to non-contiguous physical blocks via a Block Table. Physical blocks are allocated strictly on demand.

Animation showing how logical KV cache blocks are mapped to non-contiguous physical memory.

Animation showing how logical KV cache blocks are mapped to non-contiguous physical memory.

The PagedAttention kernel fetches blocks efficiently by consulting the Block Table during computation.

The PagedAttention kernel fetches blocks efficiently by consulting the Block Table during computation.

Memory Sharing & Copy-on-Write

PagedAttention naturally enables efficient memory sharing for complex sampling algorithms (e.g., parallel sampling, beam search).

- Shared Prompt: Multiple output sequences from the same prompt can point to the same physical blocks.

- Copy-on-Write (CoW): When a shared block needs to be modified, a new physical block is allocated only for the delta.

Sharing the prompt’s KV cache across multiple generation sequences.

Sharing the prompt’s KV cache across multiple generation sequences.

[!NOTE] vLLM reduces memory waste to under 4%, allowing for significantly larger batch sizes and up to 24x higher throughput than standard Transformers implementations.

Last updated: 2026-03-25

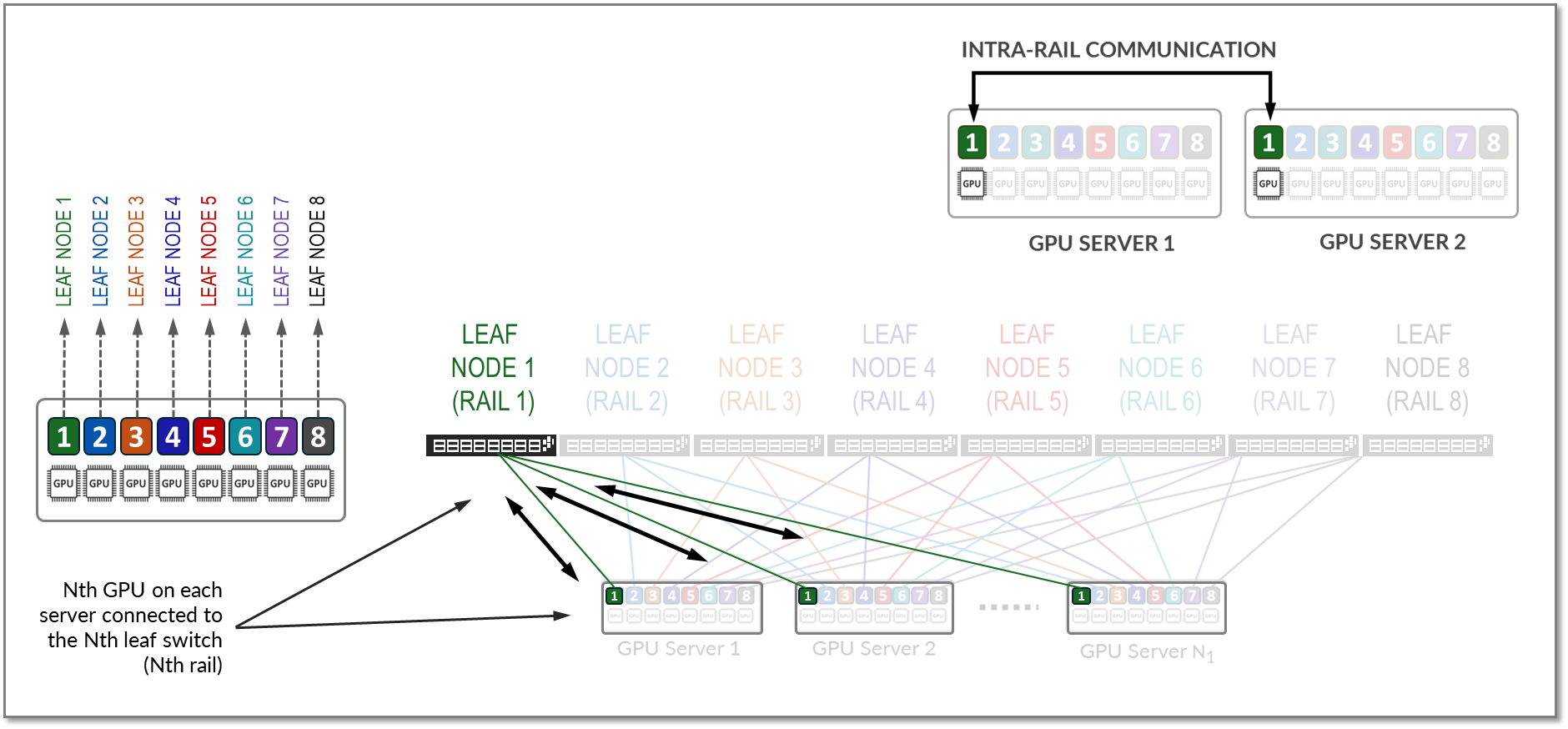

Inference Parallelism

When a model is too large for a single GPU or when scaling throughput is required, various parallelism strategies are employed.

Tensor Parallelism (TP)

Shards model weights (tensors) across multiple GPUs within a single layer.

- Scope: Usually within a single node (using high-speed NVLink).

- vLLM Config:

--tensor-parallel-size 4

Pipeline Parallelism (PP)

Distributes different layers of the model across different GPUs.

- Scope: Can span multiple nodes.

- vLLM Config:

--pipeline-parallel-size 2

Data Parallelism (DP)

Replicates the entire model across multiple GPU sets. Each set processes a different batch of requests.

- Best for: Maximizing overall system throughput.

Expert Parallelism (EP)

Used for Mixture-of-Experts (MoE) models (like DeepSeek or Mixtral). It shards the “expert” layers across GPUs while keeping common layers replicated or sharded via TP.

Last updated: 2026-03-25

Distributed Inference Tools

Modern stacks extend beyond simple model servers to include Kubernetes-native orchestration and intelligent routing.

KubeAI

A Kubernetes operator designed to streamline LLM deployments.

- OpenAI Compatible: Seamlessly integrates with existing LLM apps.

- Autoscaling: Supports “Scale-to-Zero” for cost savings.

- Prefix-Aware Routing: Directs requests to pods that already have the relevant KV cache.

- KubeAI.org

LLM-D (LLM Deployer)

A high-performance stack focusing on Disaggregated Serving.

PD Disaggregation

Separates the Prefill (prompt processing) and Decode (token generation) stages into distinct clusters.

- Prefill Clusters: Optimized for high-compute (TFLOPS).

- Decode Clusters: Optimized for high memory bandwidth and low latency.

Tiered KV Caching

LLM-D supports offloading KV-cache entries to:

- CPU RAM: Fast retrieval for warm requests.

- SSD: Persistent storage for long-tail cache.

- Network Storage: Shared cache across nodes.

Last updated: 2026-03-25

Concepts

DevOps: CI/CD Fundamentals

CI/CD Fundamentals

Automation is the engine behind DevOps. CI/CD pipelines provide a reliable, repeatable path for software to move from a developer’s machine to the end-user.

Continuous Integration (CI)

CI focuses on the early stages of the development cycle, ensuring that code changes are integrated and tested frequently.

The CI Workflow

- Code Commit: Developers push code to a shared repository (Git).

- Automated Build: The build server (GitHub Actions, GitLab CI, Jenkins) compiles the code and builds artifacts (Docker images, binaries).

- Static Analysis: Tools like SonarQube or checkstyle analyze code for security vulnerabilities and style issues.

- Testing:

- Unit Tests: Testing individual functions/classes.

- Integration Tests: Testing interactions between components.

- Security (SAST): Scanning source code for vulnerabilities.

Continuous Delivery vs. Deployment (CD)

While often used interchangeably, there is a key distinction in the level of automation.

Continuous Delivery

The code is always in a deployable state. However, the final push to production requires a manual trigger.

- Promotion: Promoting artifacts through staging/QA environments before production.

- Why?: Business requirements, compliance, or risk management.

Continuous Deployment

Every change that passes the automated pipeline is automatically deployed to production.

- Prerequisite: Extremely high confidence in automated testing and observability.

- Benefit: Minimum time-to-market and rapid feedback loops.

Pipeline Design Best Practices

- Build Once, Deploy Many: The same artifact (Docker image) should move through all environments to ensure consistency.

- Fail Fast: Run the fastest, most critical tests first to provide immediate feedback.

- Immutable Artifacts: Never modify an artifact after it’s built; version it and promote it.

- Artifact Management: Use registries like Harbor, Nexus, or JFrog Artifactory to store and version your builds.

| Stage | Goal | Tool Examples |

|---|---|---|

| Source | Version control | Git, GitHub, GitLab |

| Build | Compilation & Packaging | Maven, Go Build, Docker |

| Test | Quality & Security | Jest, JUnit, SonarQube |

| Release | Artifact storage | Harbor, ECR, Nexus |

| Deploy | Orchestration | Kubernetes, Helm, Terraform |

Last updated: 2026-03-25

Concepts

DevOps: Deployment Strategies

Deployment Strategies

Modern software delivery requires strategies that minimize downtime and blast radius. Beyond standard rolling updates, progressive delivery techniques allow for safer, metrics-driven releases.

Core Strategies

Blue/Green Deployment

Two identical environments (Blue=Stable, Green=New).

- Traffic Shifting: Managed at the load balancer or DNS level.

- DB Migrations: The biggest challenge. Strategies include:

- Expand and Contract: First add new columns (expand), then deploy code that uses both, then remove old columns (contract).

- Read-only mode: Briefly put the app in read-only during the switch.

- Pros: Instant rollback by switching back to Blue.

Canary Deployment

Incremental traffic shifting.

- Header-based Routing: Route only internal users or specific regions using HTTP headers (e.g.,

x-user-type: beta). - Automated Analysis: Tools like Argo Rollouts or Flux Flagger automatically compare metrics (Success Rate, Latency) between stable and canary.

- Rollback: Automatically triggered if error rates exceed a threshold.

Rolling Update

The default Kubernetes strategy.

- maxSurge: How many extra pods can be created during the update.

- maxUnavailable: How many pods can be taken down during the update.

- Readiness Probes: Critical for ensuring traffic only hits “warm” and healthy instances.

Recreate

- Usage: When the application cannot handle two versions running simultaneously (e.g., exclusive file locks or complex singleton states).

- Downtime: Scaled by the speed of startup/shutdown.

Progressive Delivery Tools

- Argo Rollouts: A Kubernetes controller that provides advanced deployment capabilities (Blue/Green, Canary, Analysis).

- Istio/Linkerd: Service meshes that enable fine-grained traffic splitting (e.g., 99% vs 1%).

- Feature Flags: Decoupling deployment from release. Code is deployed but hidden behind a toggle (LaunchDarkly, Unleash).

| Strategy | Speed | Risk | Seamless | Complexity |

|---|---|---|---|---|

| Recreate | Fast | High | No | Low |

| Rolling | Slow | Medium | Yes | Low |

| Blue/Green | Fast | Low | Yes | High |

| Canary | Slow | Lowest | Yes | High |

Last updated: 2026-03-25

Concepts

DevOps: IaC & GitOps

Infrastructure as Code (IaC) & GitOps

Treating infrastructure like software is the cornerstone of modern DevOps. This ensures reproducibility, auditability, and speed.

Infrastructure as Code (IaC)

IaC allows teams to manage and provision infrastructure through code rather than manual processes.

Key Concepts

- Declarative vs Imperative:

- Declarative: Focuses on the desired state (e.g., “I want 3 VMs”). Examples: Terraform, OpenTofu, CloudFormation, Pulumi.

- Imperative: Focuses on the steps to achieve the state (e.g., “Run this script to install Nginx”). Examples: Ansible, Chef, Puppet.

- Idempotency: The ability to run the same code multiple times and achieve the same result without unintended side effects.

- State Management: Tools like Terraform maintain a

.tfstatefile to track the real-world resources and map them to your code.

Terraform Deep Dive

- Providers: Plugins that interact with cloud APIs (AWS, GCP, Kubernetes).

- Modules: Reusable building blocks to standardize infrastructure patterns.

- Backends: Remote storage for state files (S3, GCS, Terraform Cloud) with locking mechanisms (DynamoDB) to prevent concurrent changes.

GitOps Principles

GitOps is an operational framework that takes DevOps best practices (version control, collaboration, CI/CD) and applies them to infrastructure automation.

The Four Pillars

- Declarative Description: The entire system is described declaratively in Git.

- Versioned Source of Truth: Changes to the system are made via Pull Requests.

- Automatically Pulled: The infrastructure is automatically updated when the Git state changes.

- Continuously Reconciled: Software agents (operators) constantly compare the desired state (Git) with the actual state (Cluster).

GitOps vs Traditional CI/CD

| Feature | Traditional CD (Push) | GitOps (Pull) | | :— | :— | :— | | Trigger | CI server pushes to Cluster | Cluster agent pulls from Git | | Security | CI needs cluster credentials | Agents run inside the cluster | | Drift | Hard to detect | Automatically corrected |

Tools

- ArgoCD: Provides a powerful UI and supports multi-cluster management.

- Flux CD: A lightweight, CNCF-graduated tool focused on automation and security.

- Sealed Secrets / External Secrets: Strategies to manage sensitive data in Git without storing plan-text secrets.

| Tool | Focus | Philosophy |

|---|---|---|

| Terraform | Infrastructure Provisioning | Generic, multi-cloud |

| Ansible | Configuration Management | Procedural, agentless |

| ArgoCD | Kubernetes CD | GitOps, UI-driven |

Last updated: 2026-03-25

Concepts

DevOps: Git Internals

Git Internals & Advanced Config

Git is a content-addressable filesystem. Understanding how it moves data between its internal areas is key to mastering the tool.

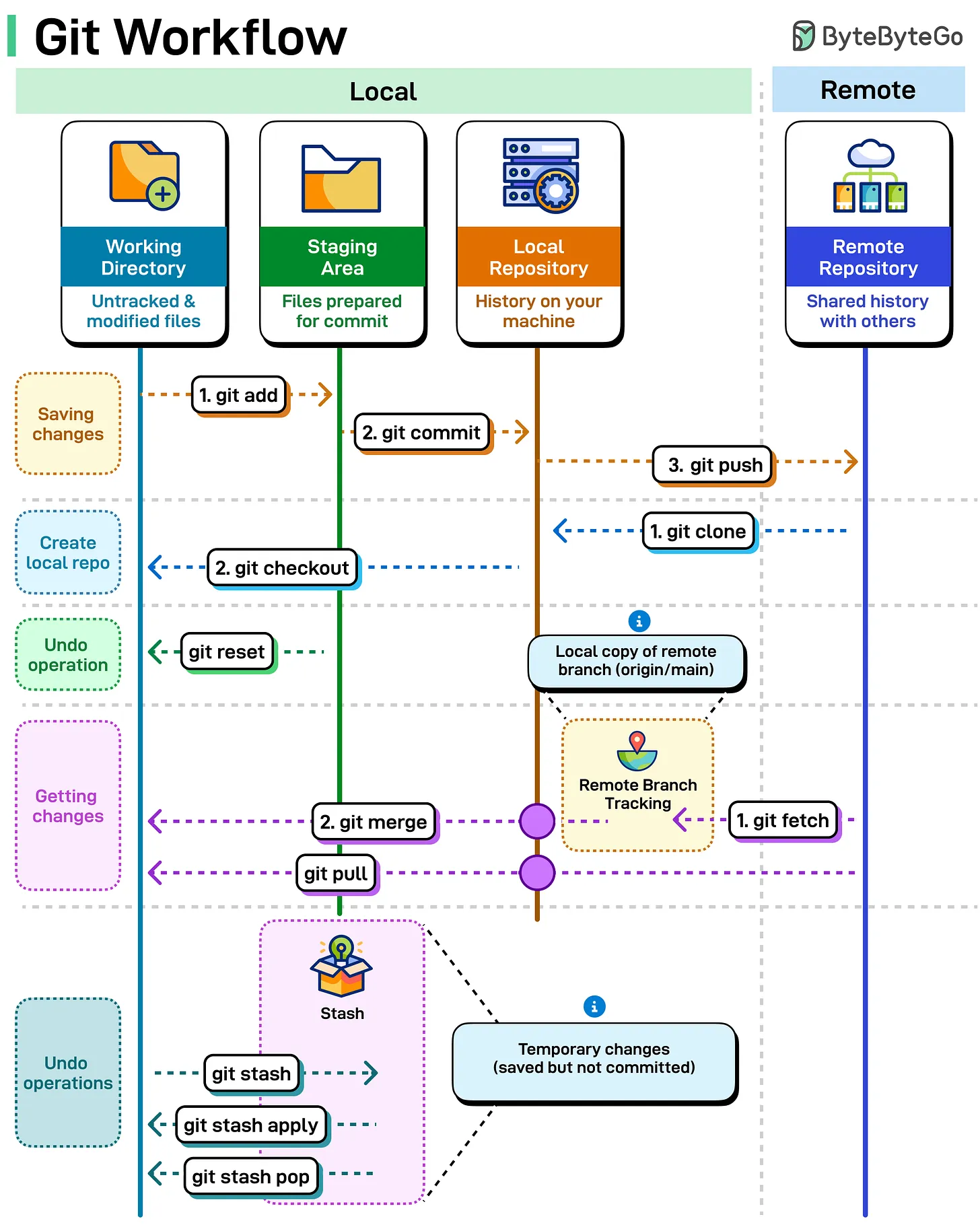

The Git Workflow (4 Areas)

Git manages your code across four distinct areas. Most commands are simply moving data between these stages.

- Working Directory: Your local files on disk that you are currently editing.

- Staging Area (Index): A “draft” area where you prepare changes for the next commit.

- Local Repository (HEAD): Your personal version history on your machine.

- Remote Repository: The shared version of the project (e.g., GitHub, GitLab).

Essential Commands

git add: Moves changes from Working Directory to Staging Area.git commit: Saves staged changes to the Local Repository.git push: Uploads local commits to the Remote Repository.git fetch: Downloads updates from Remote to Local Repository (without merging).git merge: Integrates downloaded changes into your current branch.git pull: Performsfetch+mergein a single step.git checkout: Switches between branches or restores files.git stash: Temporarily “shelves” changes in the Working Directory to be restored later.

Visualizing the Data Flow

Advanced Configurations

These settings are frequently used by Git core developers to improve the default experience, focusing on better diffing, pushing, and conflict resolution.

Better Diffing & Visibility

# Use the smarter histogram diff algorithm

git config --global diff.algorithm histogram

# Highlight moved code in different colors

git config --global diff.colorMoved plain

# Show the full diff when writing commit messages

git config --global commit.verbose true

Streamlined Pushing & Fetching

# Automatically set upstream branch on first push

git config --global push.autoSetupRemote true

# Automatically prune stale remote-tracking branches on fetch

git config --global fetch.prune true

# Push tags automatically when pushing branches

git config --global push.followTags true

Conflict Resolution & Maintenance

# Show the "base" version in merge conflicts (Zealous Diff3)

git config --global merge.conflictstyle zdiff3

# Reuse recorded resolutions (rerere) for repeating conflicts

git config --global rerere.enabled true

git config --global rerere.autoupdate true

# Default to rebase when pulling

git config --global pull.rebase true

# enable filesystem monitor for faster status in large repos

git config --global core.fsmonitor true

Safety & Automation

# Guess and prompt for autocorrecting mistyped commands

git config --global help.autocorrect prompt

# Automatically stash/pop changes before/after rebase

git config --global rebase.autoStash true

Sources:

Last updated: 2026-03-25

Concepts

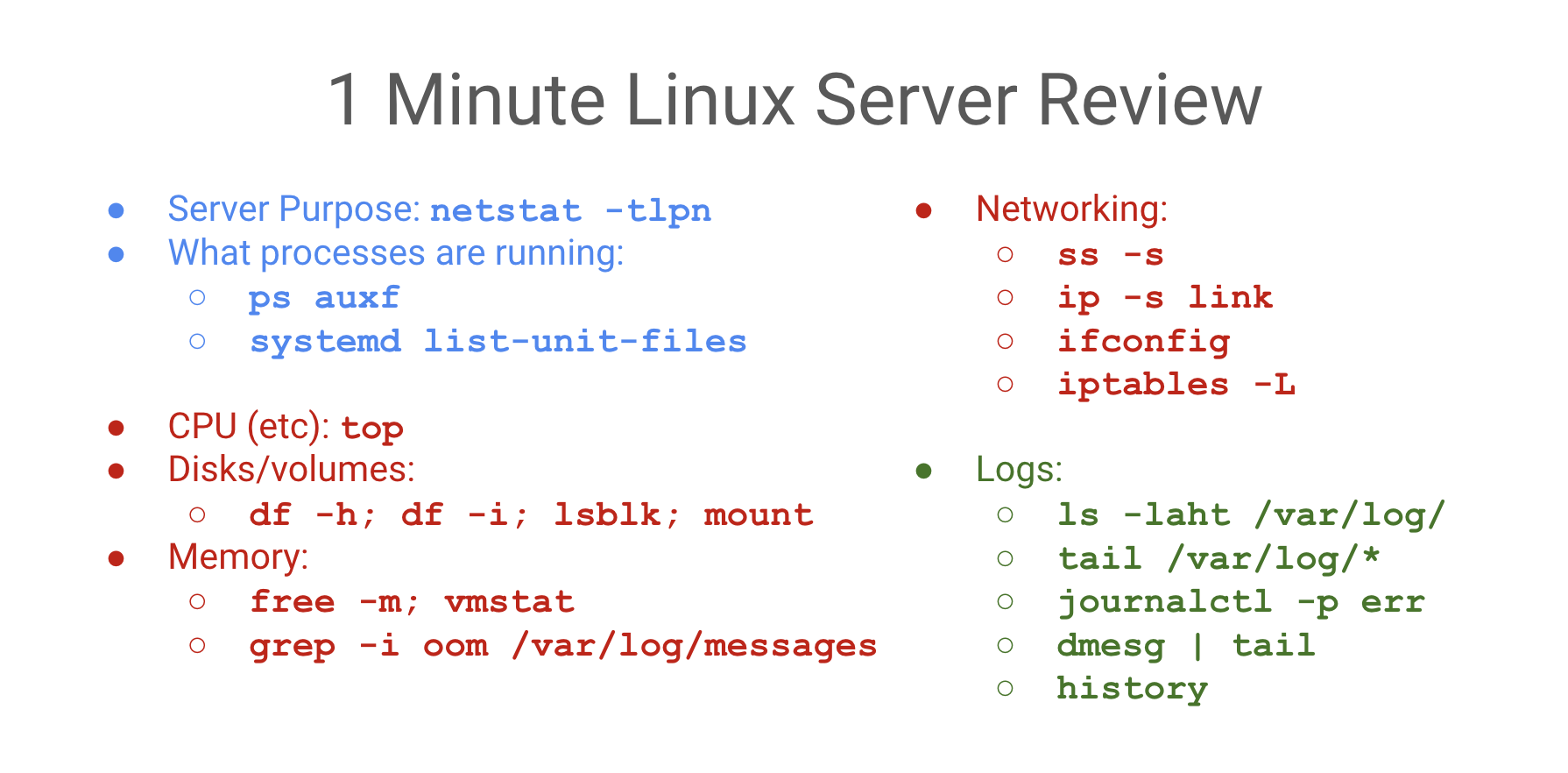

DevOps: Linux Fundamentals

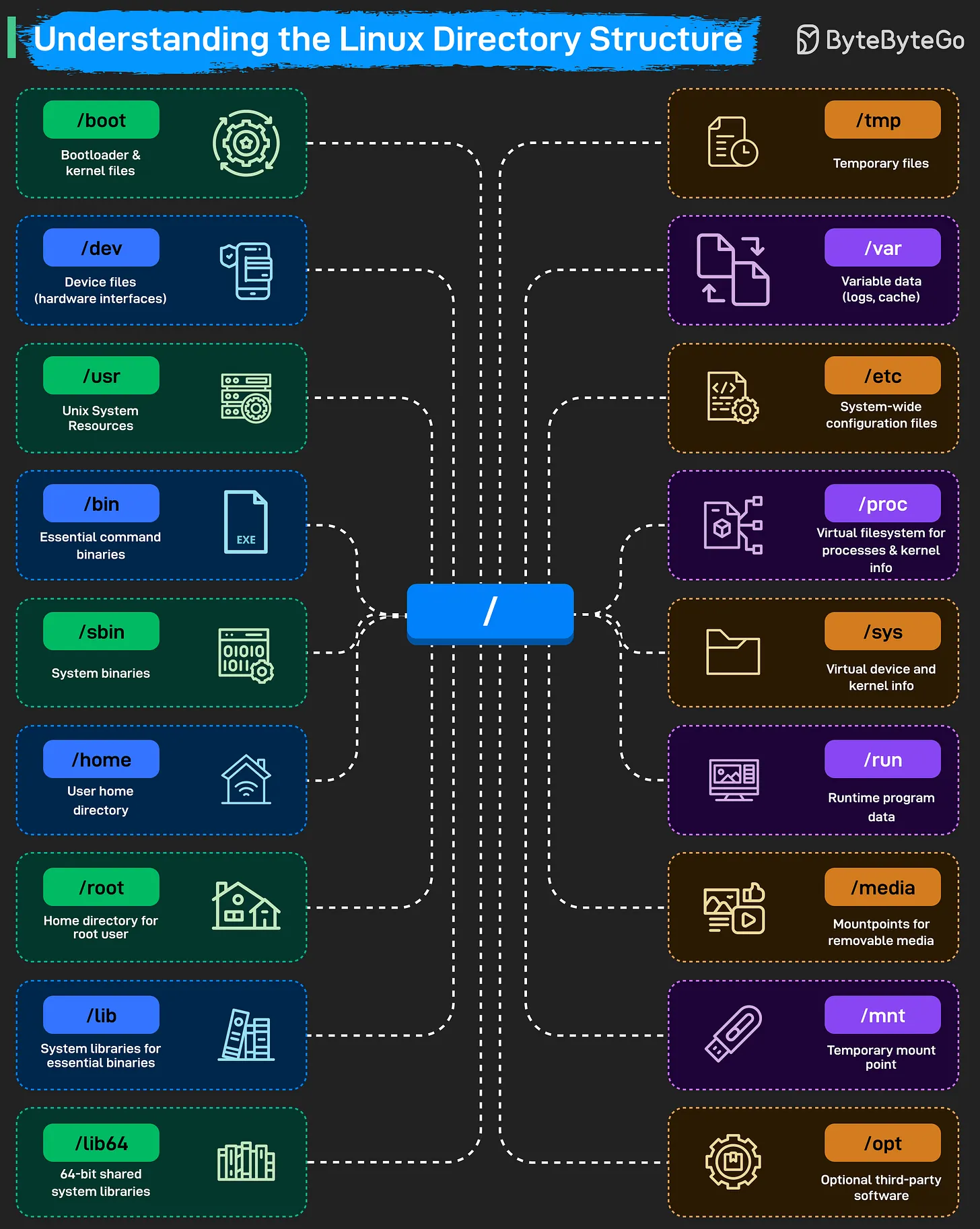

Understanding the Linux Directory Structure

The Linux filesystem follows a hierarchical structure, starting from the root directory /. Everything in Linux—including hardware devices, processes, and system configurations—is represented as a file within this tree.

The Linux Filesystem Hierarchy

Core System Directories

/(Root): The starting point of the entire filesystem. Every other directory is a child of root./boot: Stores the bootloader (e.g., GRUB) and kernel files. The system cannot start without this directory./bin&/sbin: Contain essential binaries and system commands./binholds commands for all users, while/sbinholds system administration binaries./lib&/lib64: System libraries that support the binaries in/binand/sbin.

Configuration & Data

/etc: The central location for all system-wide configuration files./home: Contains personal directories for regular users (e.g.,/home/alice)./root: The home directory for the root (superuser) account./var: Stores “variable” data that changes frequently, such as logs (/var/log), caches, and spool files./tmp: A place for temporary files, which are often cleared on reboot.

Resources & Applications

/usr: Contains user-level applications, libraries, and source code. It is often the largest directory on the system./opt: Reserved for “optional” or third-party software packages (e.g., Chrome, Zoom)./run: Records runtime information for programs since the last boot (e.g., PID files).

Hardware & Virtual Filesystems

/dev: Holds device files that act as interfaces to hardware (e.g.,/dev/sdafor a disk)./proc: A virtual filesystem that provides information about running processes and kernel parameters./sys: Another virtual filesystem that exposes kernel information about hardware devices and drivers./media&/mnt: Used for mounting external storage./mediais typically for auto-mounted removable devices (USB, CD-ROM), while/mntis for manual temporary mounts.

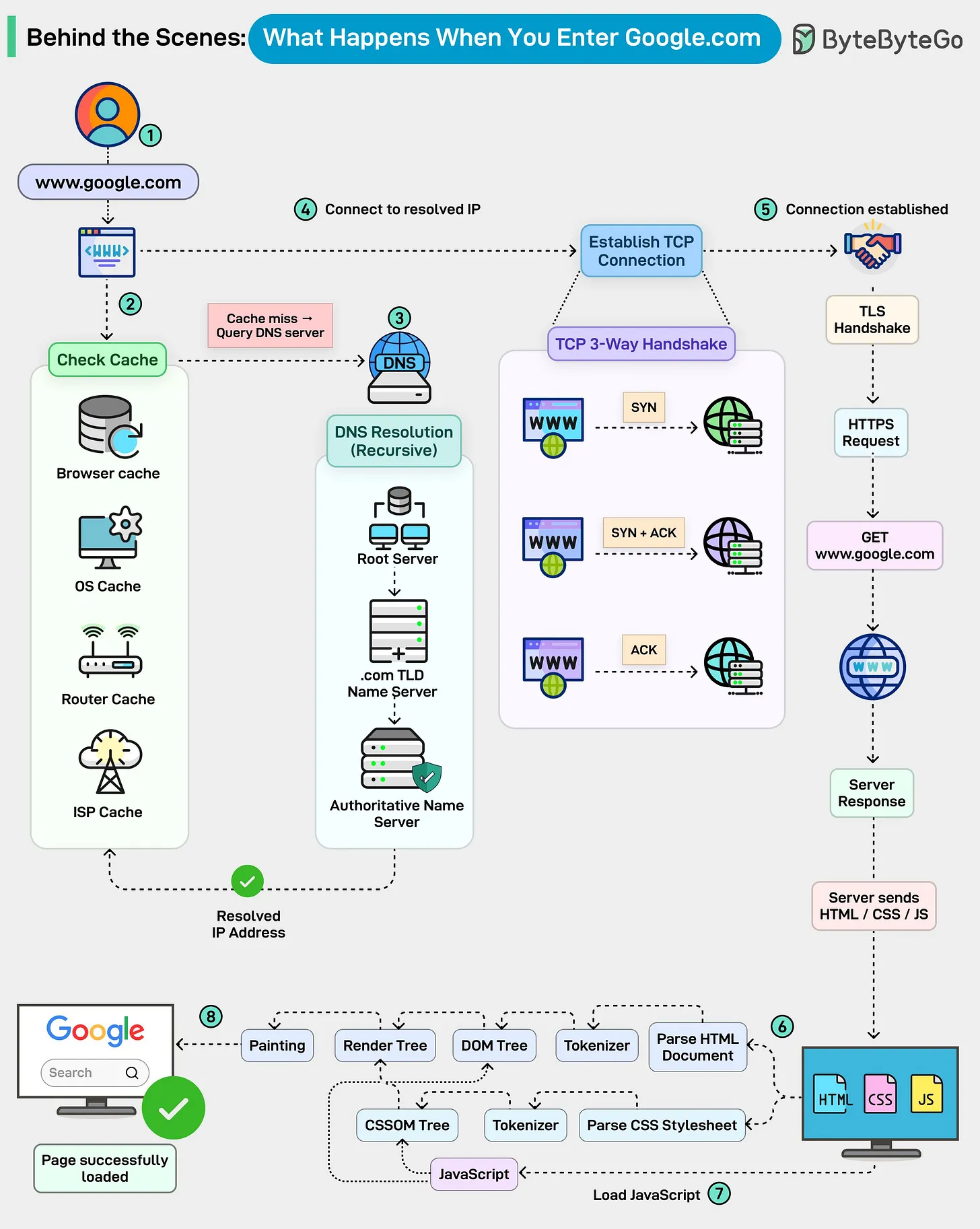

Source: ByteByteGo - Understanding the Linux Directory Structure

Last updated: 2026-03-25

The Linux Boot Process Explained